The following procedure is for CTERA Portal 8.3.3000.x, not 8.3.3300.x. You must add a second NIC to each CTERA Portal server that you deploy. The second NIC does not need to be public.

Use the following workflow to install CTERA Portal.

- Creating a Portal Instance using a CTERA Portal image obtainable from CTERA support.

- Configuring Network Settings.

- Optionally, if it is not set correctly, configure a default gateway.

- Additional Installation Instructions for Customers Without Internet Access.

- For the first server you install, follow the steps in Configuring the Primary Server.

- For any additional servers besides the primary server, install the server as described below and configure it as an additional server, as described in Installing and Configuring Additional CTERA Portal Servers.

- Make sure that you replicate the database, as described in Configuring the CTERA Portal Database for Backup.

- Back up the server as described in Backing Up the CTERA Portal Servers and Storage.

You can use block-storage-level snapshots for backup, but snapshots are periodic in nature, configured to run every few hours. Therefore, you cannot recover the metadata to an arbitrary point in time, and you can lose a significant amount of data on failure. Also, many storage systems do not support block-level snapshots and replication, or do not do so efficiently.

Creating a Portal Instance

You can install the CTERA Portal from the Red Hat OpenShift console. The installation requires the CTERA Portal qcow2 image, which you get from CTERA support and save to the client computer.

To install the CTERA Portal Server:

- Log in to the Red Hat OpenShift console.

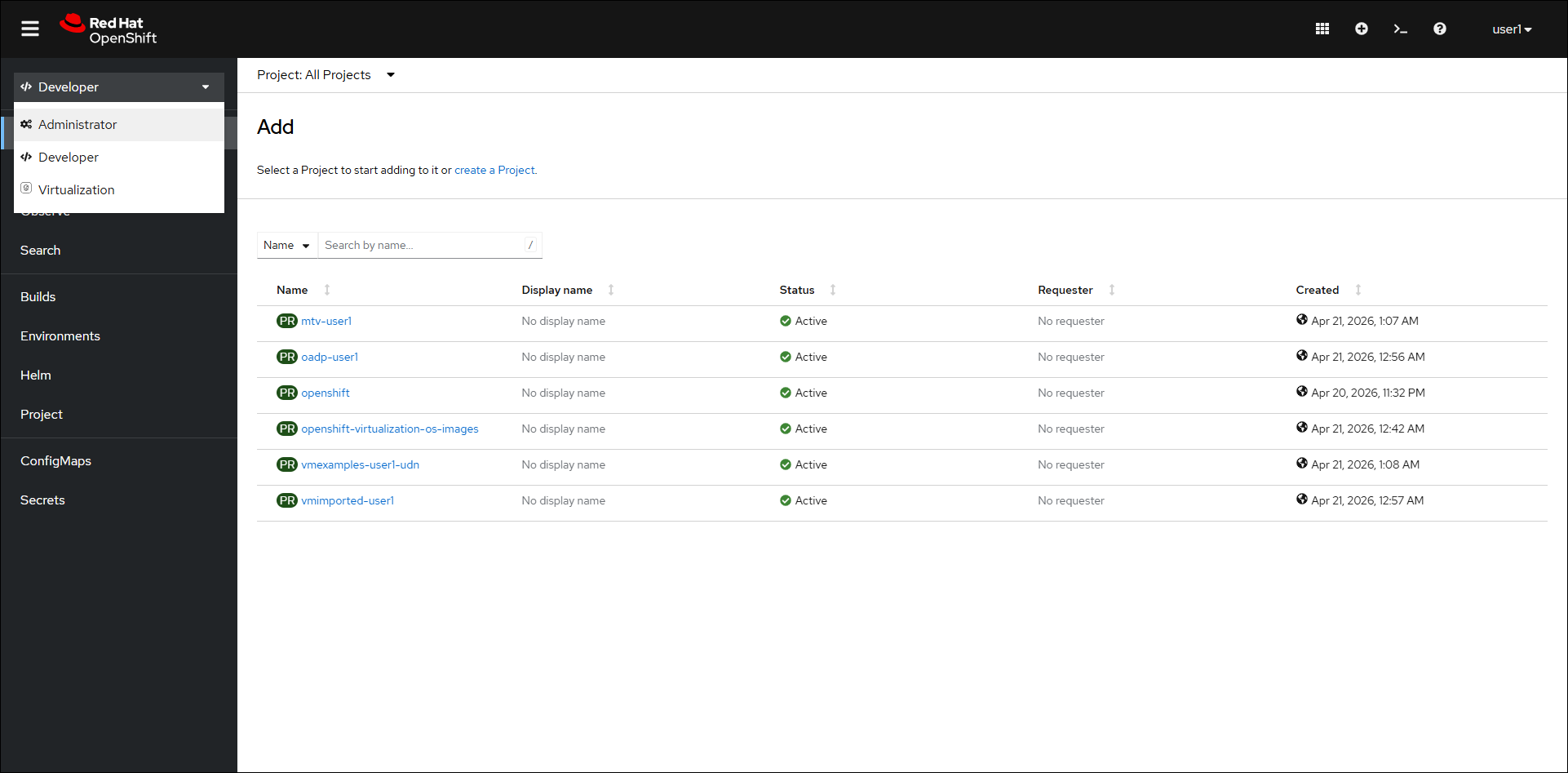

- If necessary, change the view to Administrator, in the top left drop-down.

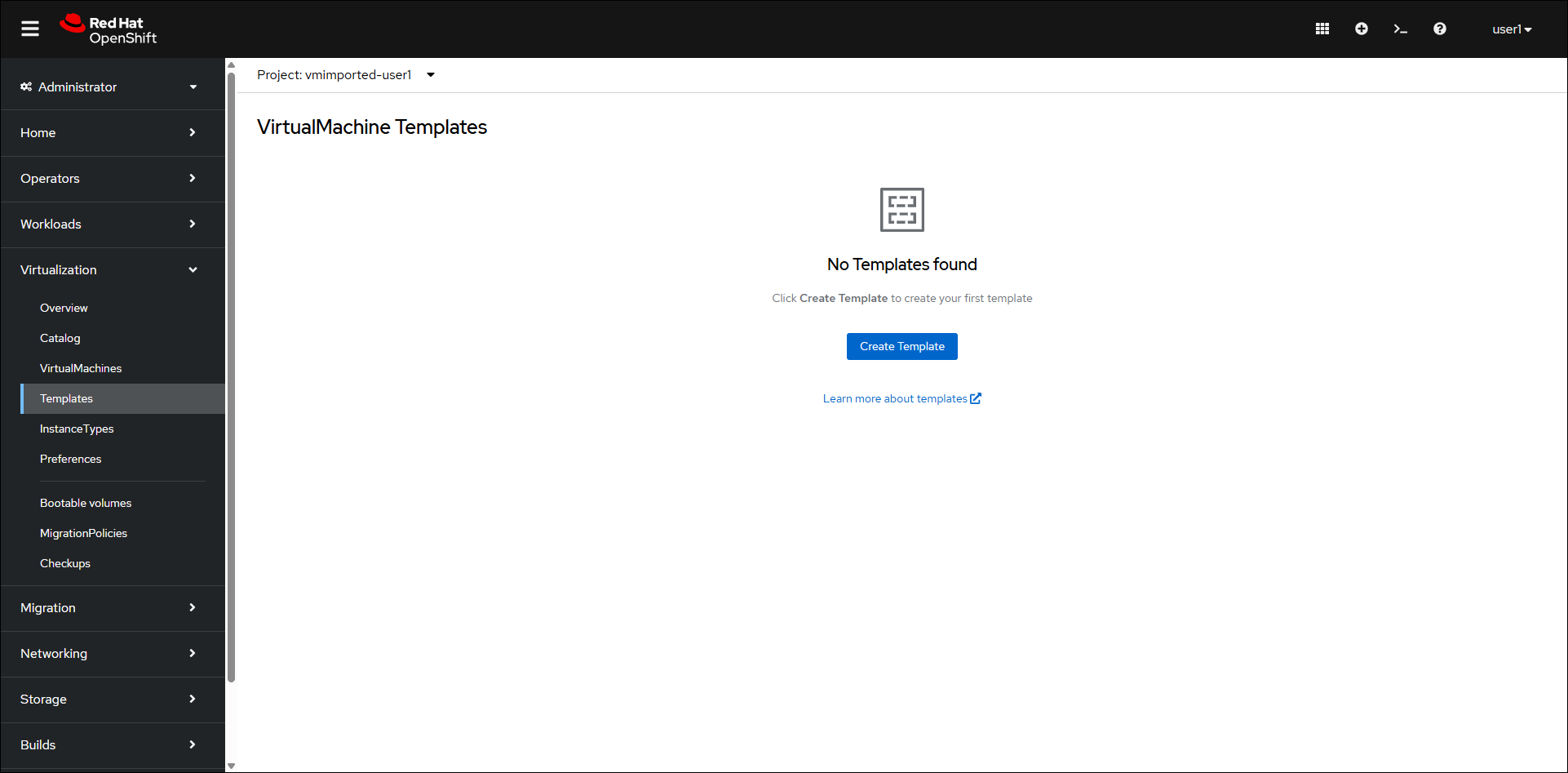

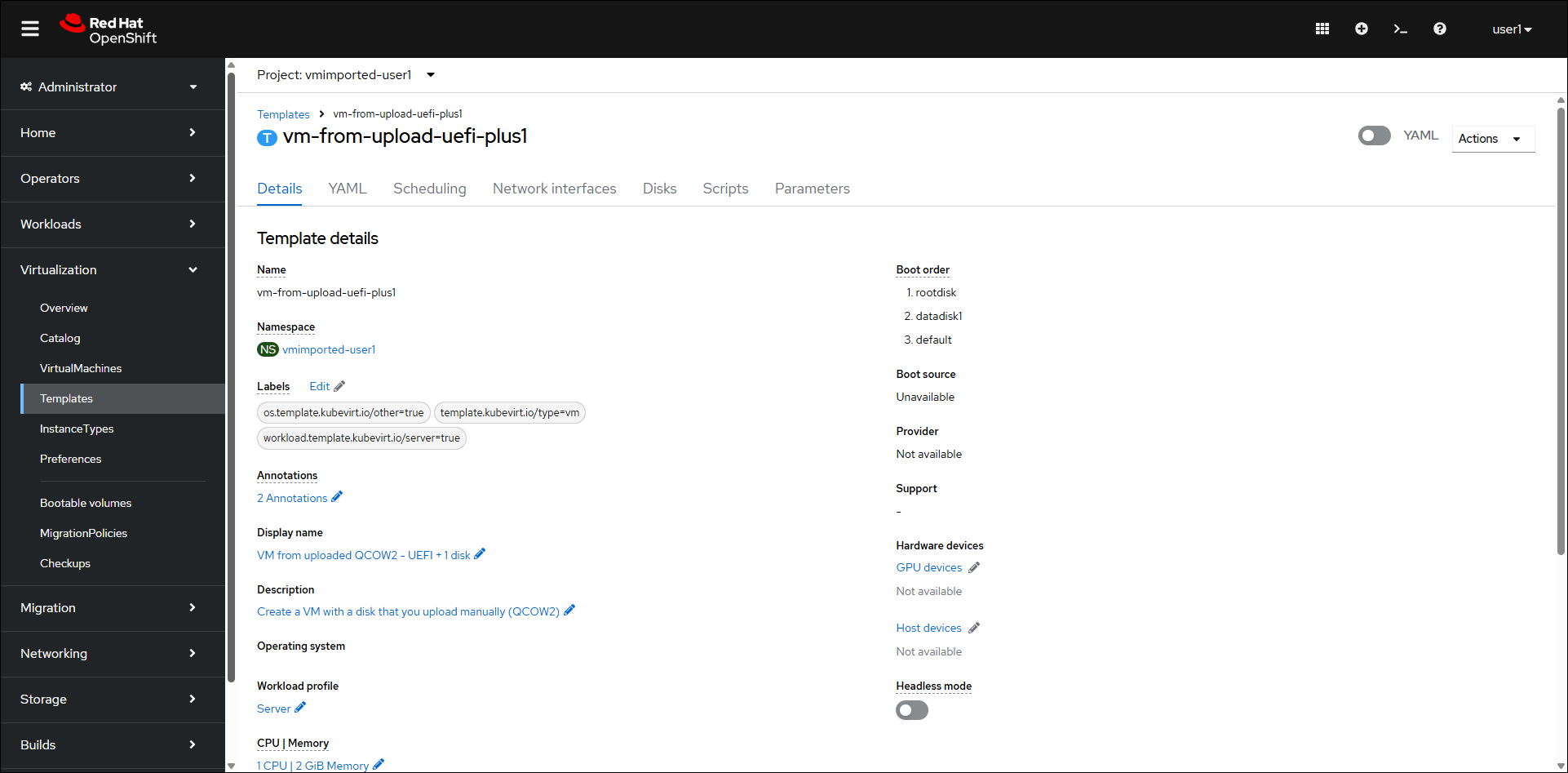

The Projects page is displayed.

- Click Virtualization > Templates in the navigation pane.

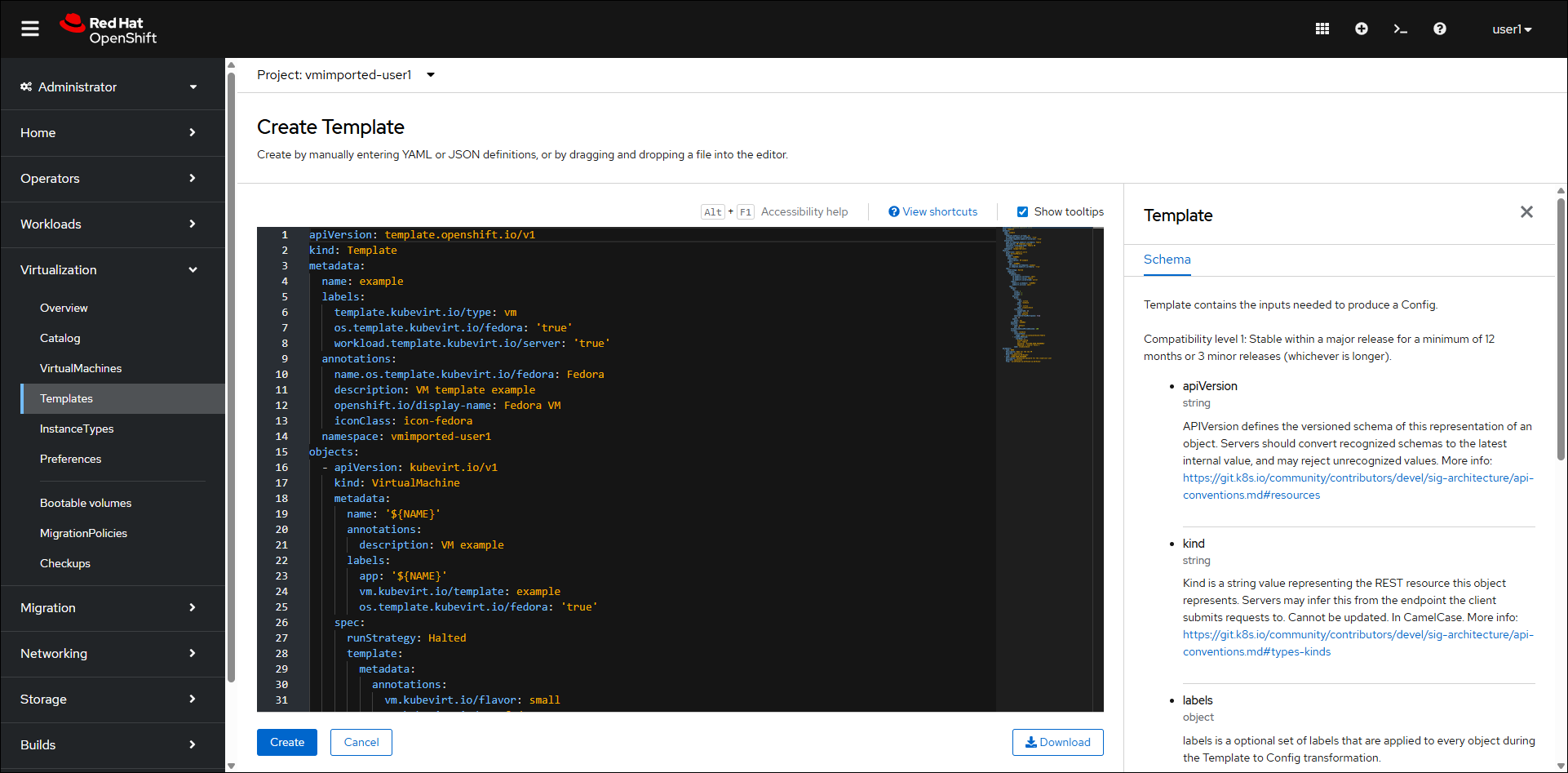

If the VM from uploaded QCOW2 - UEFI + 1 disk is displayed, continue with step 4. Otherwise:

- Click Create Template.

- Replace the code with the following:

    kind: Template
    apiVersion: template.openshift.io/v1
    metadata:
      name: vm-from-upload-uefi-plus1
      namespace: vmimported-user1
      uid: 21ddfbbf-1b4b-47eb-b9c6-d17c114946ba
      resourceVersion: '2149121'
      creationTimestamp: '2026-03-23T06:33:18Z'
      labels:
        os.template.kubevirt.io/other: 'true'
        template.kubevirt.io/type: vm
        workload.template.kubevirt.io/server: 'true'
      annotations:
        description: Create a VM with a disk that you upload manually (QCOW2)
        openshift.io/display-name: VM from uploaded QCOW2 - UEFI + 1 disk
      managedFields:
        - manager: Mozilla
          operation: Update
          apiVersion: template.openshift.io/v1
          time: '2026-03-23T06:33:18Z'
          fieldsType: FieldsV1
          fieldsV1:
            'f:metadata':
              'f:annotations':
                .: {}
                'f:description': {}
                'f:openshift.io/display-name': {}
              'f:labels':
                .: {}
                'f:os.template.kubevirt.io/other': {}
                'f:template.kubevirt.io/type': {}
                'f:workload.template.kubevirt.io/server': {}
            'f:objects': {}
            'f:parameters': {}
    objects:
      - apiVersion: kubevirt.io/v1
        kind: VirtualMachine
        metadata:
          name: '${NAME}'
          labels:
            app: '${NAME}'
        spec:
          running: false
          dataVolumeTemplates:
            - apiVersion: cdi.kubevirt.io/v1beta1
              kind: DataVolume
              metadata:
                name: '${NAME}-rootdisk'
              spec:
                source:
                  upload: {}
                storage:
                  resources:
                    requests:
                      storage: '${ROOTDISK_SIZE}'
            - apiVersion: cdi.kubevirt.io/v1beta1
              kind: DataVolume
              metadata:
                name: '${NAME}-datadisk1'
              spec:
                source:
                  blank: {}
                storage:
                  resources:
                    requests:
                      storage: '${EXTRA1_SIZE}'
          template:
            metadata:
              annotations:
                vm.kubevirt.io/flavor: small
                vm.kubevirt.io/os: centos9
                vm.kubevirt.io/workload: server
              labels:
                kubevirt.io/domain: '${NAME}'
                kubevirt.io/size: small
            spec:
              architecture: amd64
              domain:
                cpu:
                  cores: 1
                  sockets: 1
                  threads: 1
                devices:
                  disks:
                    - name: rootdisk
                      disk:
                        bus: virtio
                    - name: datadisk1
                      disk:
                        bus: virtio
                  interfaces:
                    - name: default
                      masquerade: {}
                      model: virtio
                  networkInterfaceMultiqueue: true
                  rng: {}
                features:
                  acpi: {}
                  smm:
                    enabled: true
                firmware:
                  bootloader:
                    efi:
                      secureBoot: false
                machine:
                  type: q35
                resources:
                  requests:
                    memory: 2Gi
              evictionStrategy: LiveMigrate
              networks:
                - name: default
                  pod: {}
              terminationGracePeriodSeconds: 180
              volumes:
                - name: rootdisk
                  dataVolume:
                    name: '${NAME}-rootdisk'
                - name: datadisk1
                  dataVolume:
                    name: '${NAME}-datadisk1'
    parameters:
      - name: NAME
        description: VM name
        value: CTERA
        required: true
      - name: ROOTDISK_SIZE
        description: Must be >= qcow2 virtual size
        value: 32Gi
      - name: EXTRA1_SIZE
        description: Extra disk size
        value: 250Gi

- Click Create.

- Click Create Template.

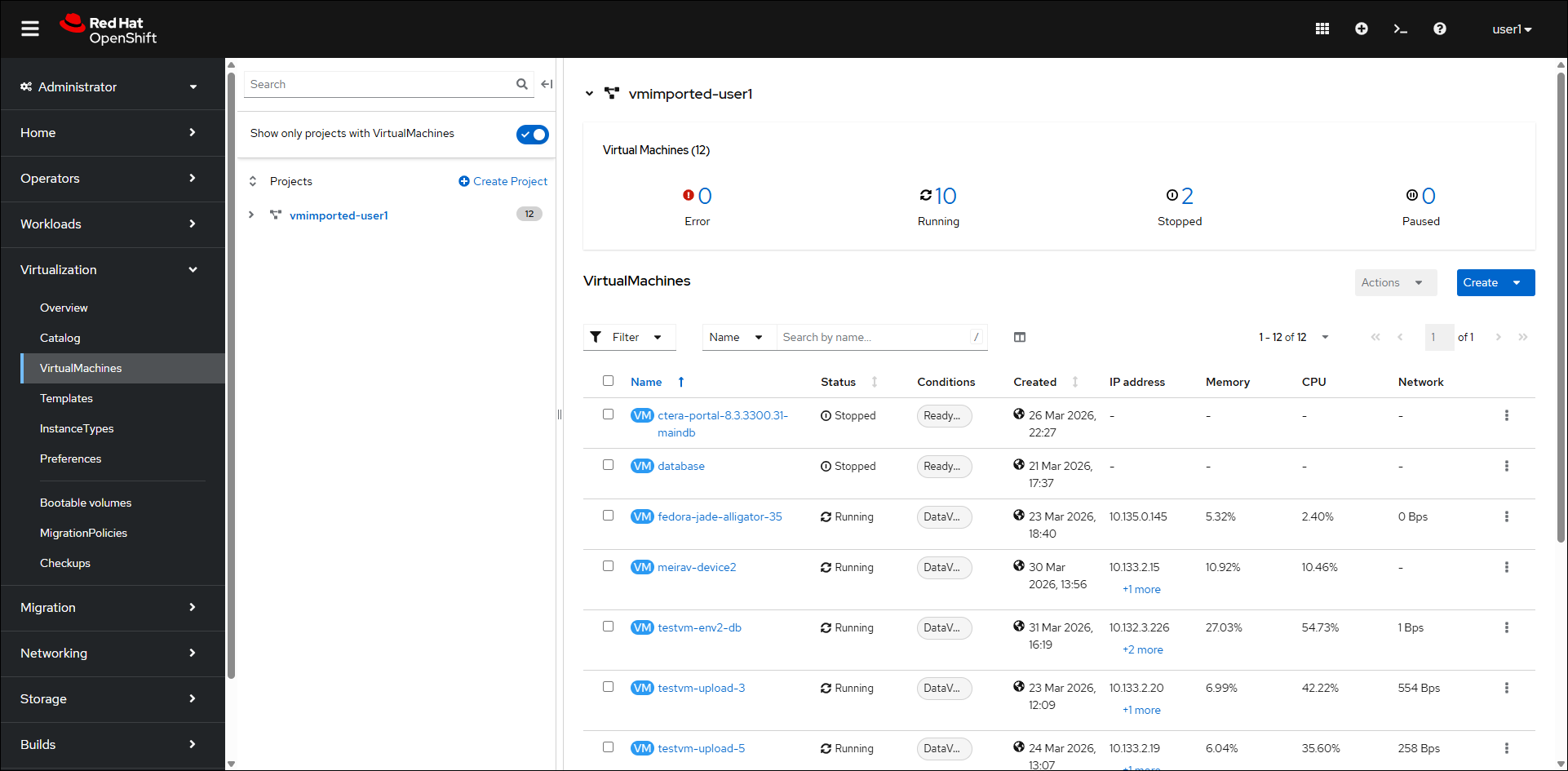

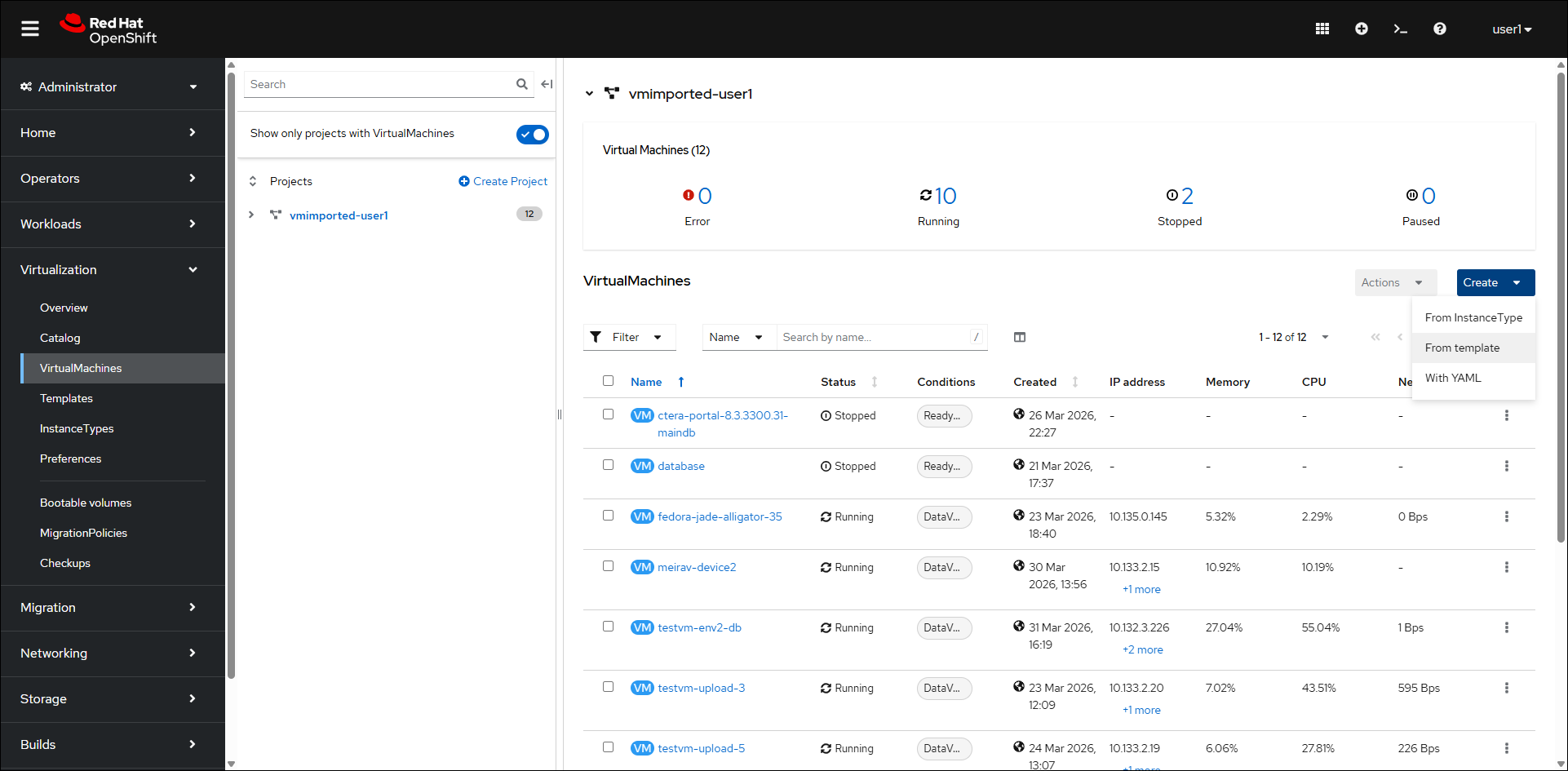

- Click Virtualization > VirtualMachines in the navigation pane.

- Click Create > From template.

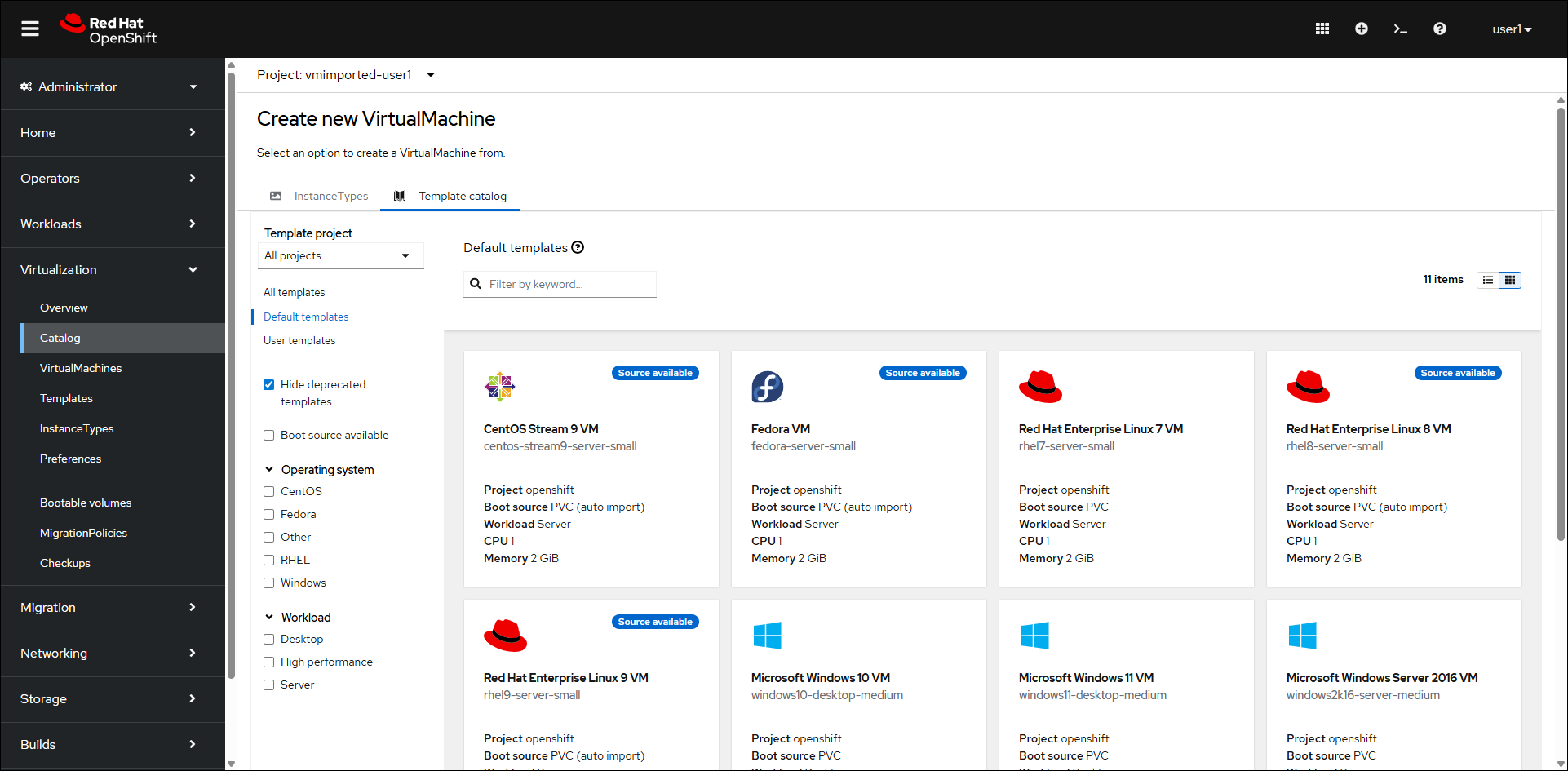

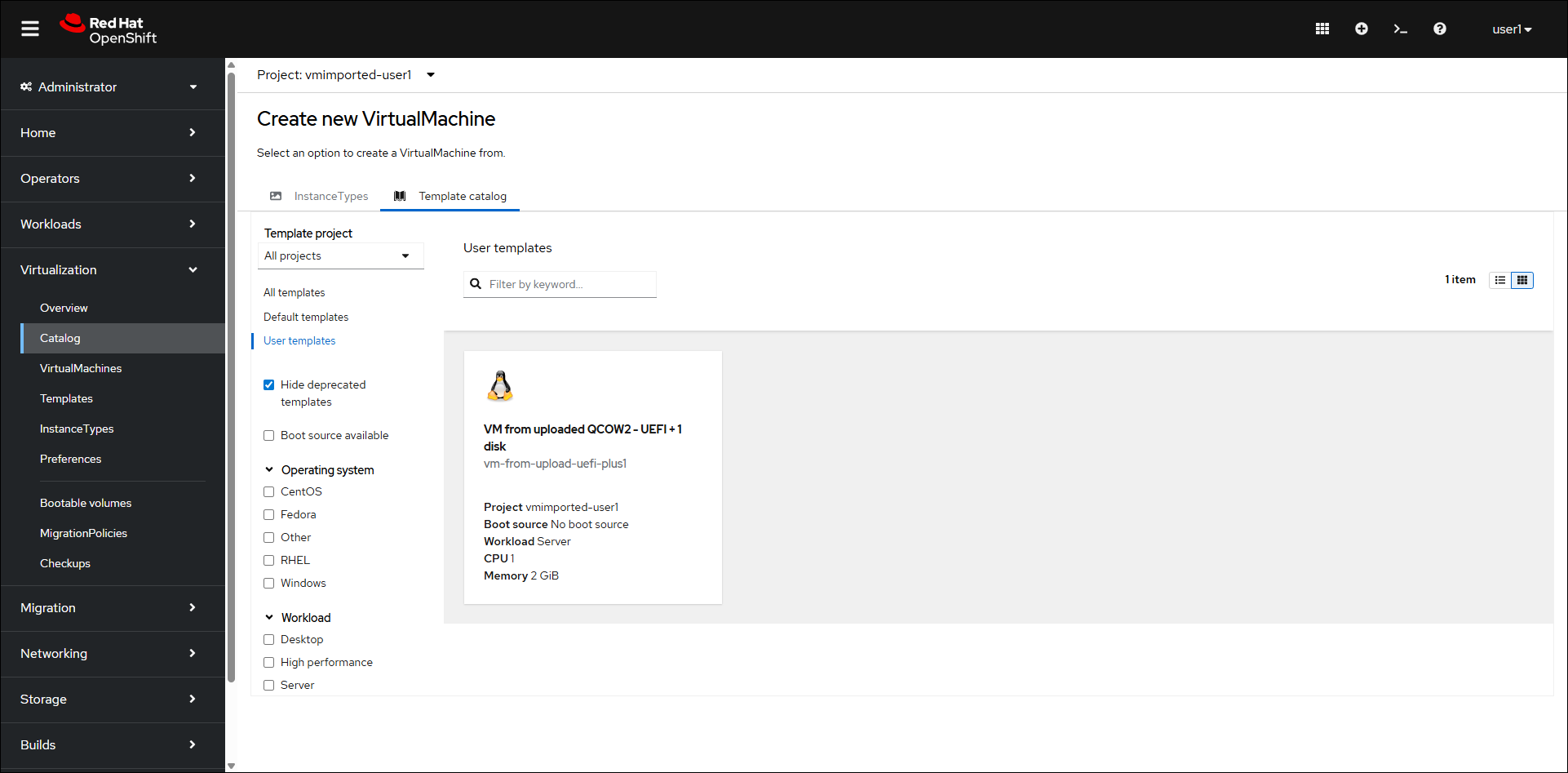

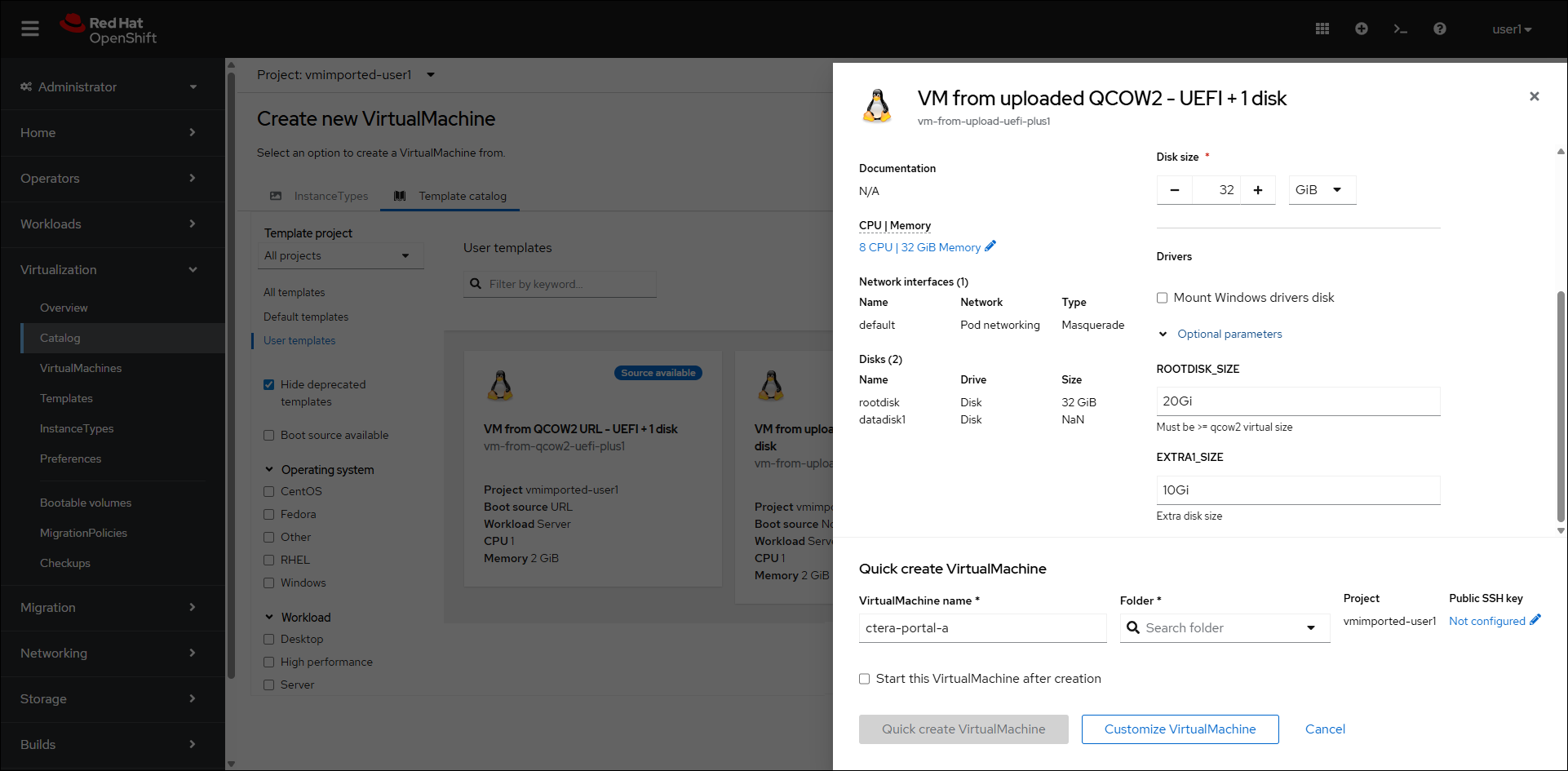

The Create new VirtualMachine page is displayed.

- Click User templates.

- Click the VM from uploaded QCOW2 - UEFI + 1 disk option.

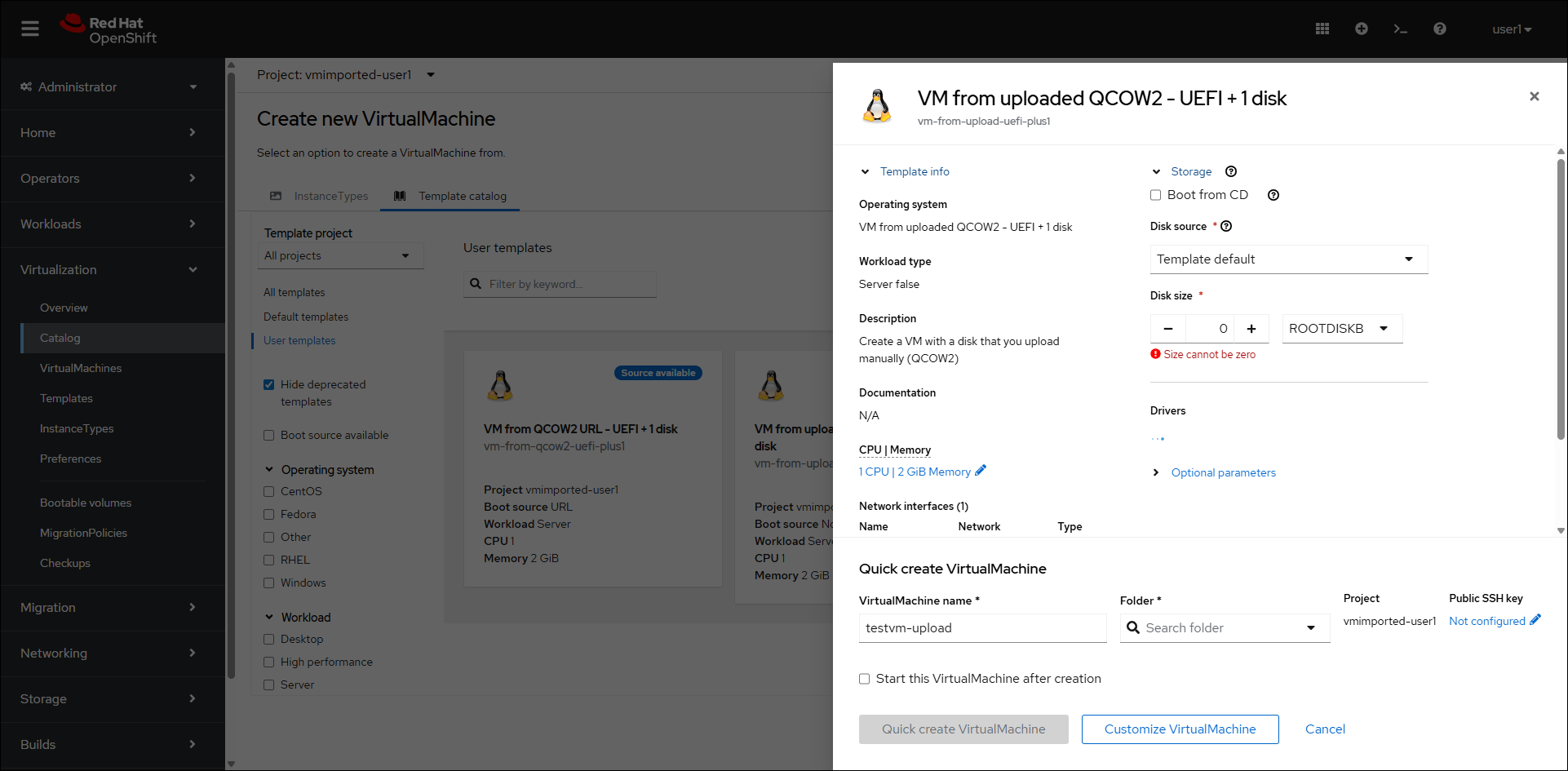

The VM from uploaded QCOW2 - UEFI + 1 disk blade is displayed.

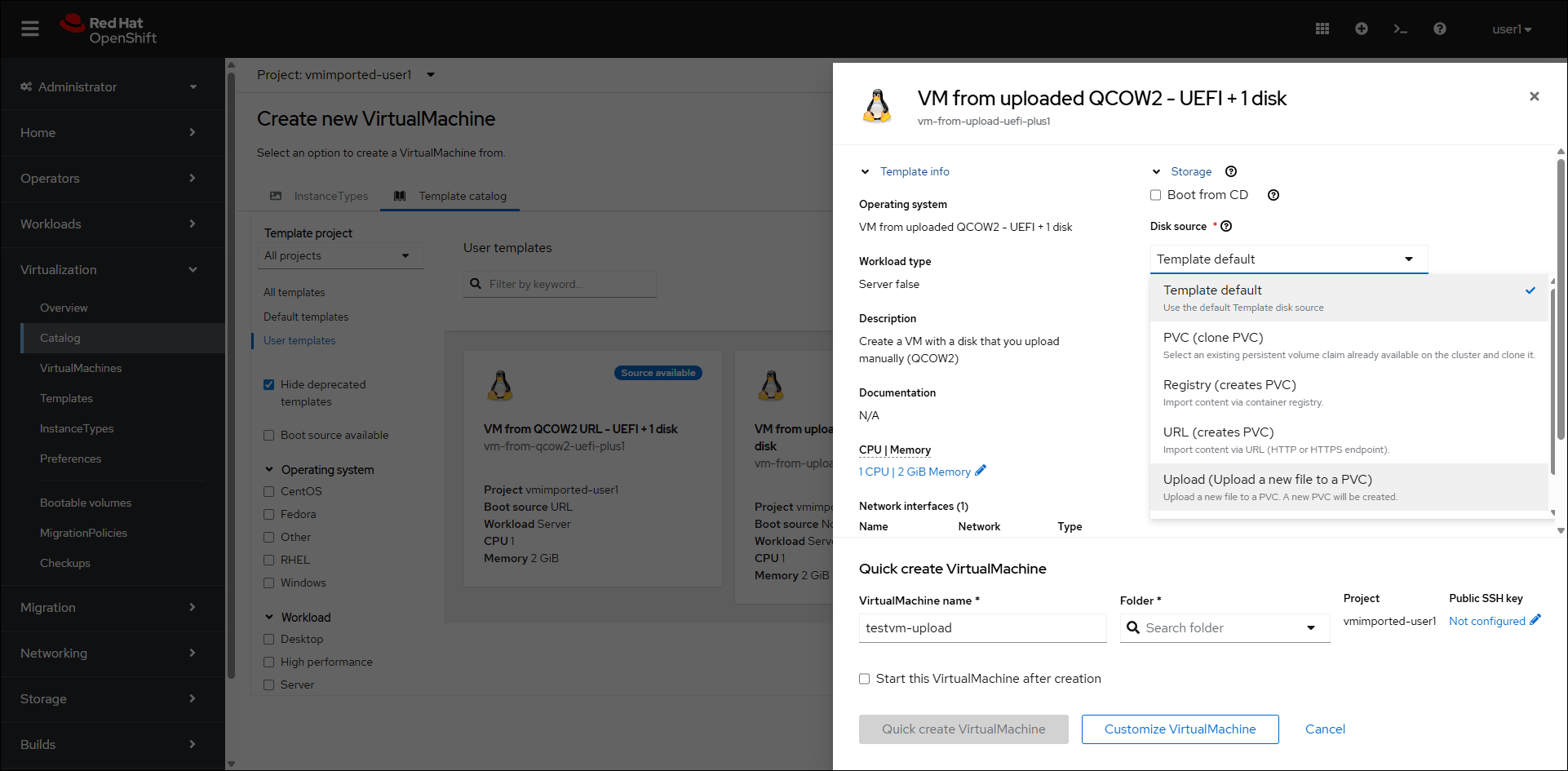

- For Disk source select Upload (Upload a new file to a PVC).

- Browse to the CTERA Portal qcow2 image file,

Portal_Image_<version>.qcow2where version is the CTERA Portal version and select it. - Set the Disk size to

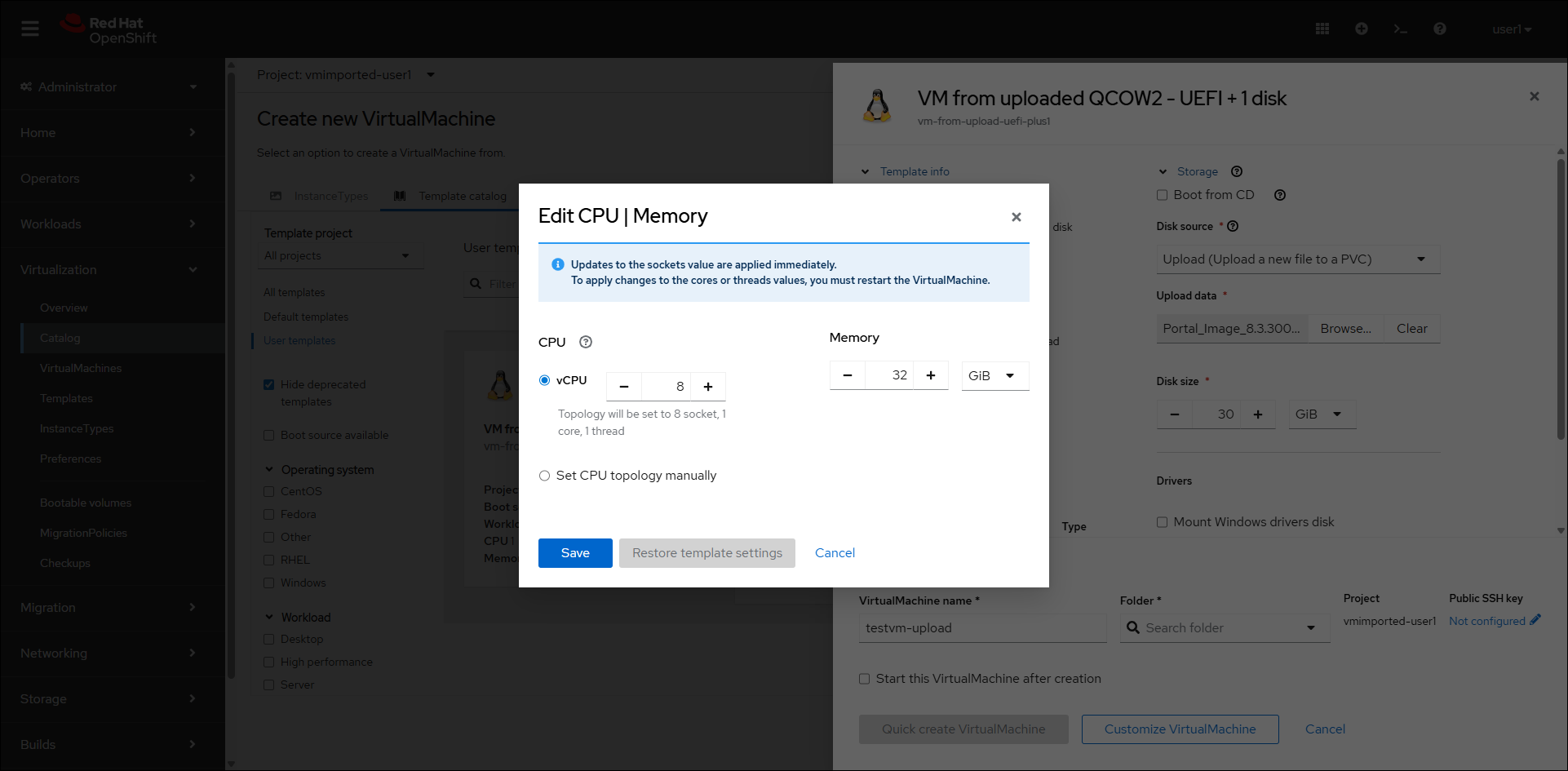

32Gib. - Click CPU | Memory and set 8 vCPUs and 32GiB memory.

- Click Saveand then click Optional parameters in the VM from uploaded QCOW2 - UEFI + 1 disk blade.

- Change the ROOTDISK_SIZE to

32Giand the EXTRA1_SIZE to 1% of the expected global file system size or a minimum of 200Gi. - Enter a name for the portal server in the VirtualMachine name field In the VM from uploaded QCOW2 - UEFI + 1 disk blade. The name must be only lower case alphanumeric characters, '-' or '.', and must start and end with an alphanumeric character.

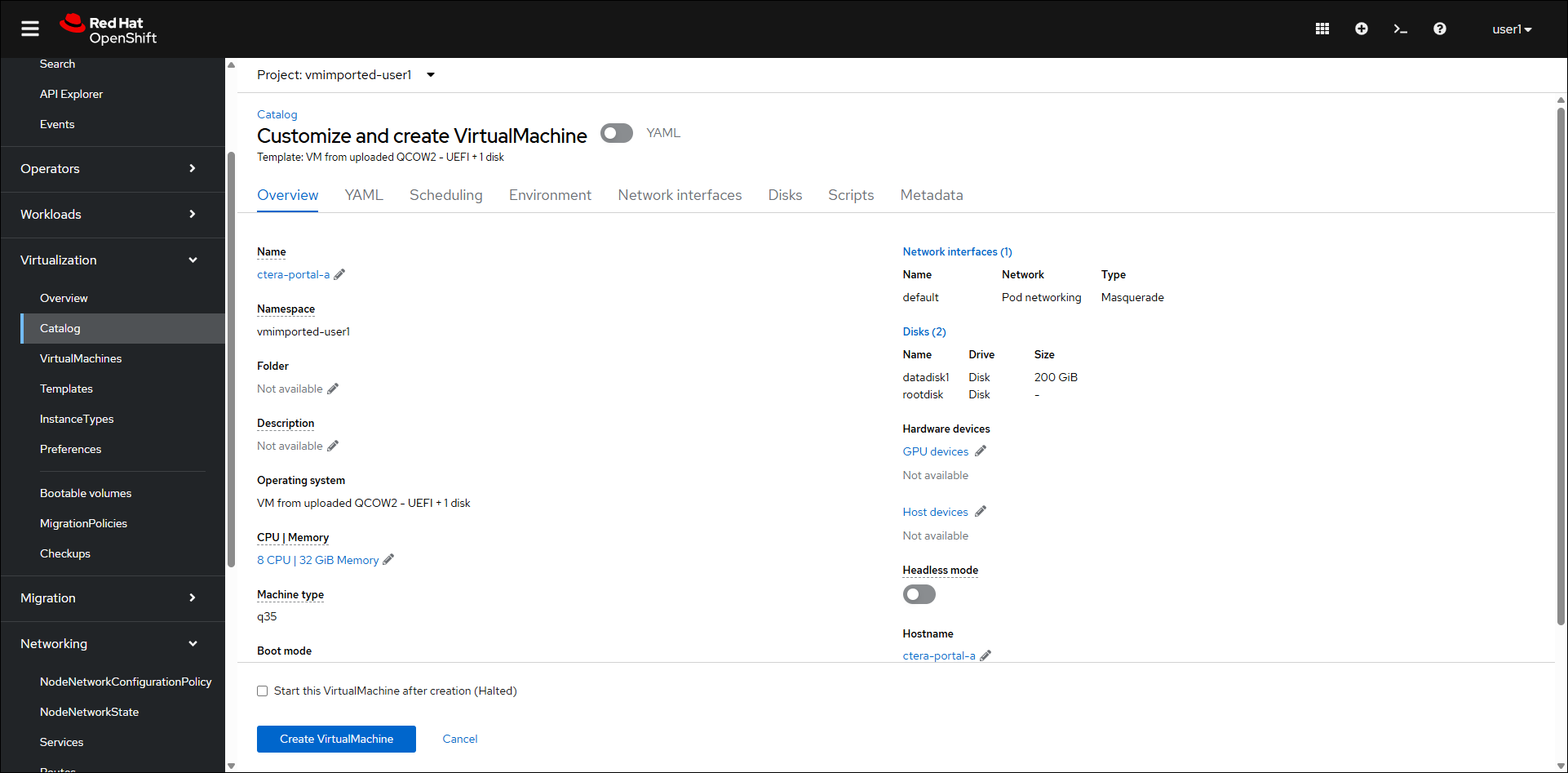
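The naming rule for the VirtualMachine name can be checked before you type the name into the console. The following is a minimal sketch of such a check; is_valid_vm_name is a hypothetical helper name chosen for illustration and is not part of CTERA Portal or OpenShift.

```shell
# Hypothetical helper: succeeds only if the name contains lowercase
# alphanumerics, '-' or '.', and starts and ends with an alphanumeric character.
is_valid_vm_name() {
    # LC_ALL=C keeps the a-z range strictly ASCII lowercase.
    printf '%s\n' "$1" | LC_ALL=C grep -Eq '^[a-z0-9]([a-z0-9.-]*[a-z0-9])?$'
}

if is_valid_vm_name "ctera-portal-01"; then
    echo "name accepted"
fi
```

Note that the NAME parameter in the template defaults to CTERA, which is uppercase, so the default has to be replaced with a name that passes this rule.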

- Click Customize VirtualMachine.

The upload of the qcow2 image starts.

- When the upload finishes, click Create VirtualMachine.

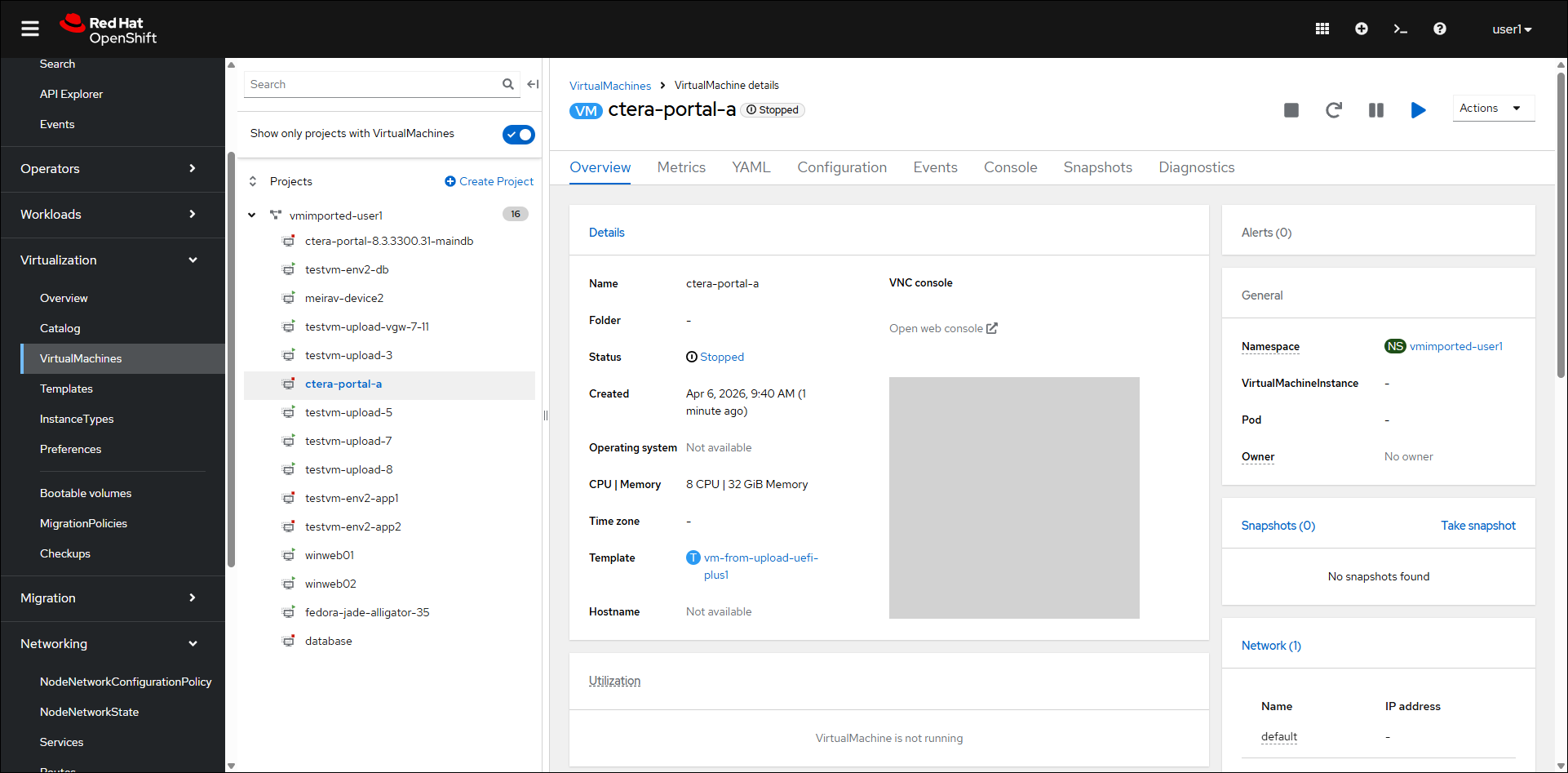

The virtual machine is created.

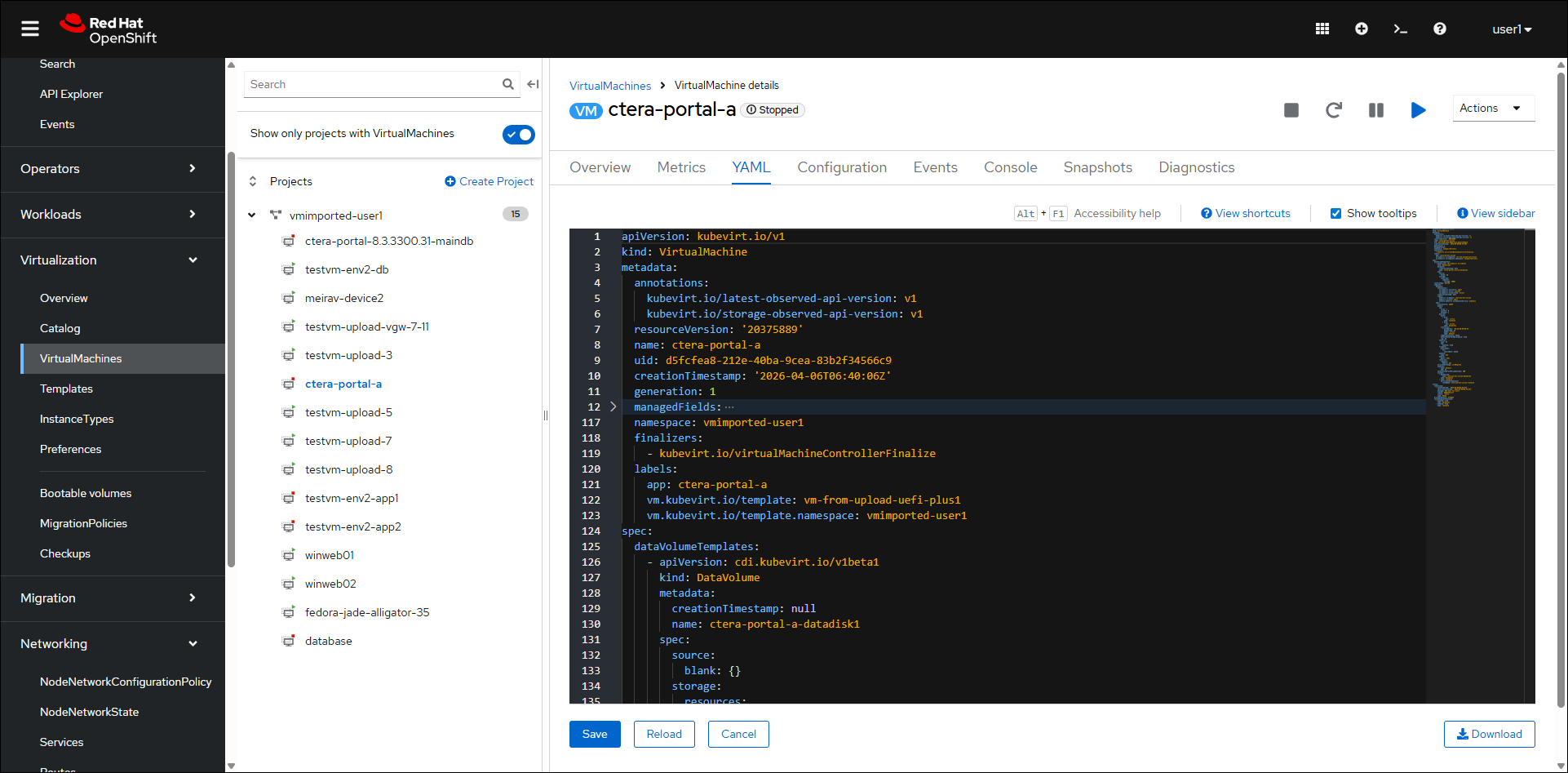

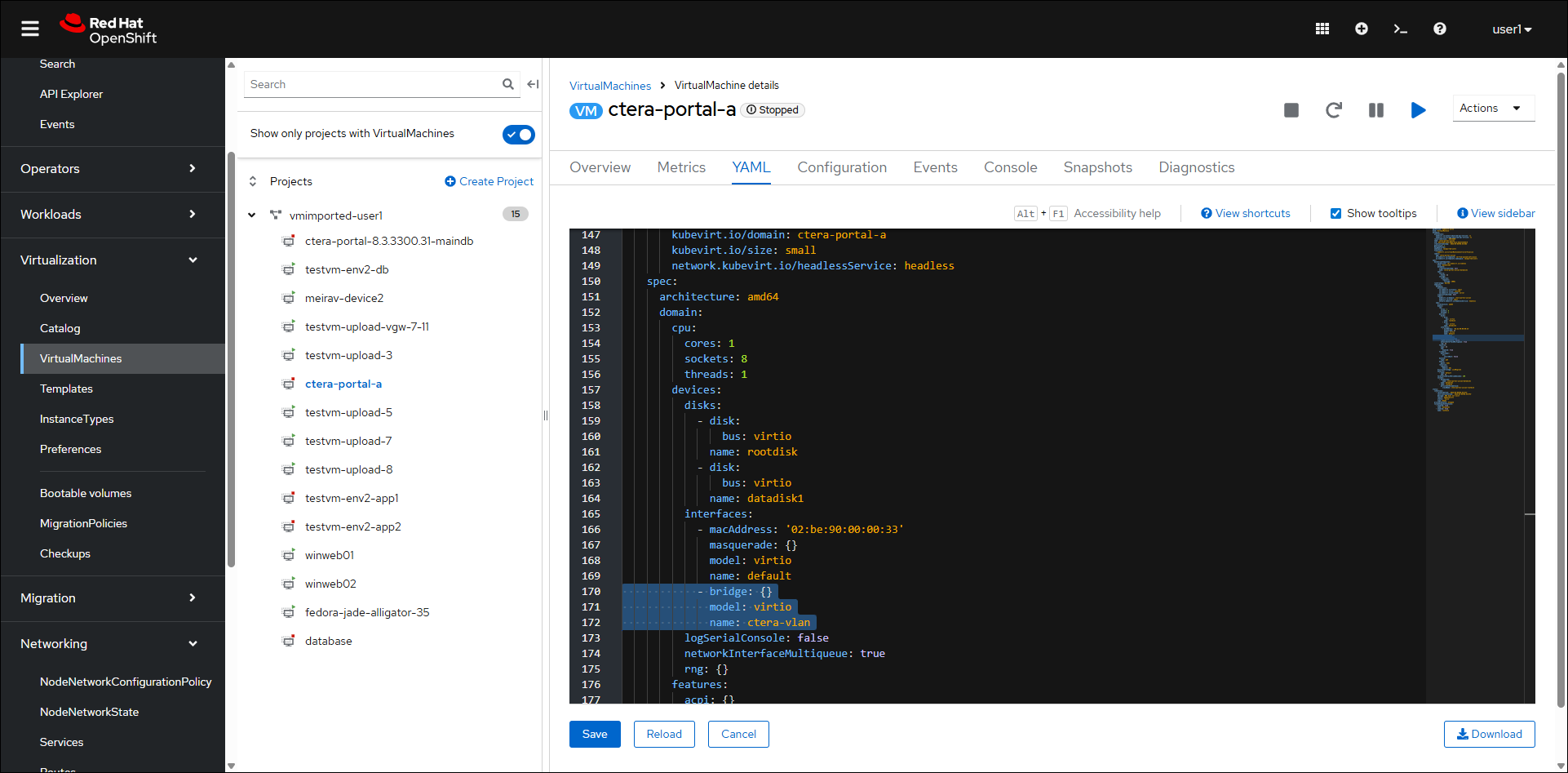

- Click the YAML tab.

- Add the following highlighted text under interfaces:

    - bridge: {}
      model: virtio
      name: ctera-vlan

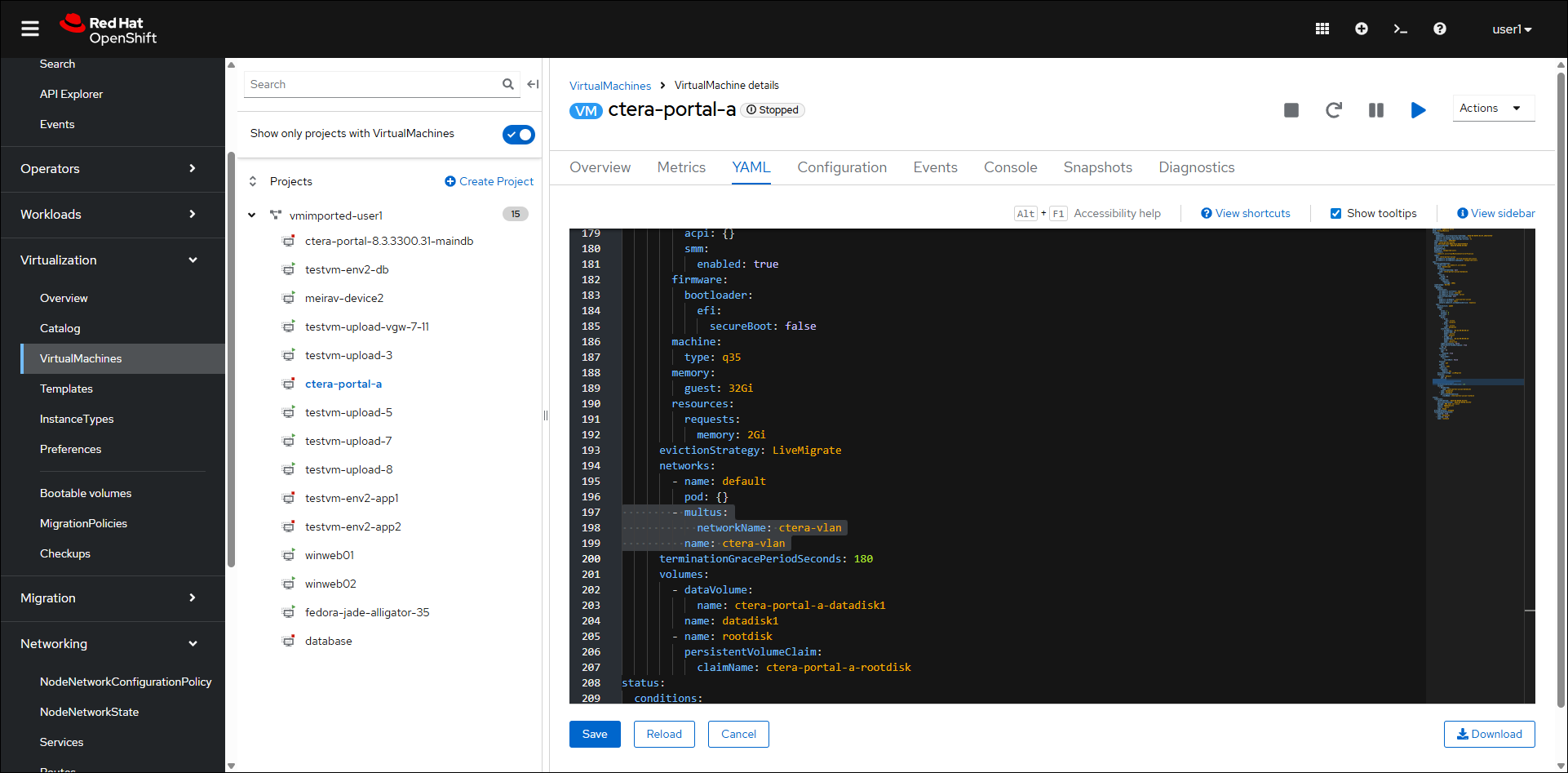

- Add the following highlighted text under networks:

    - multus:
        networkName: ctera-vlan
      name: ctera-vlan

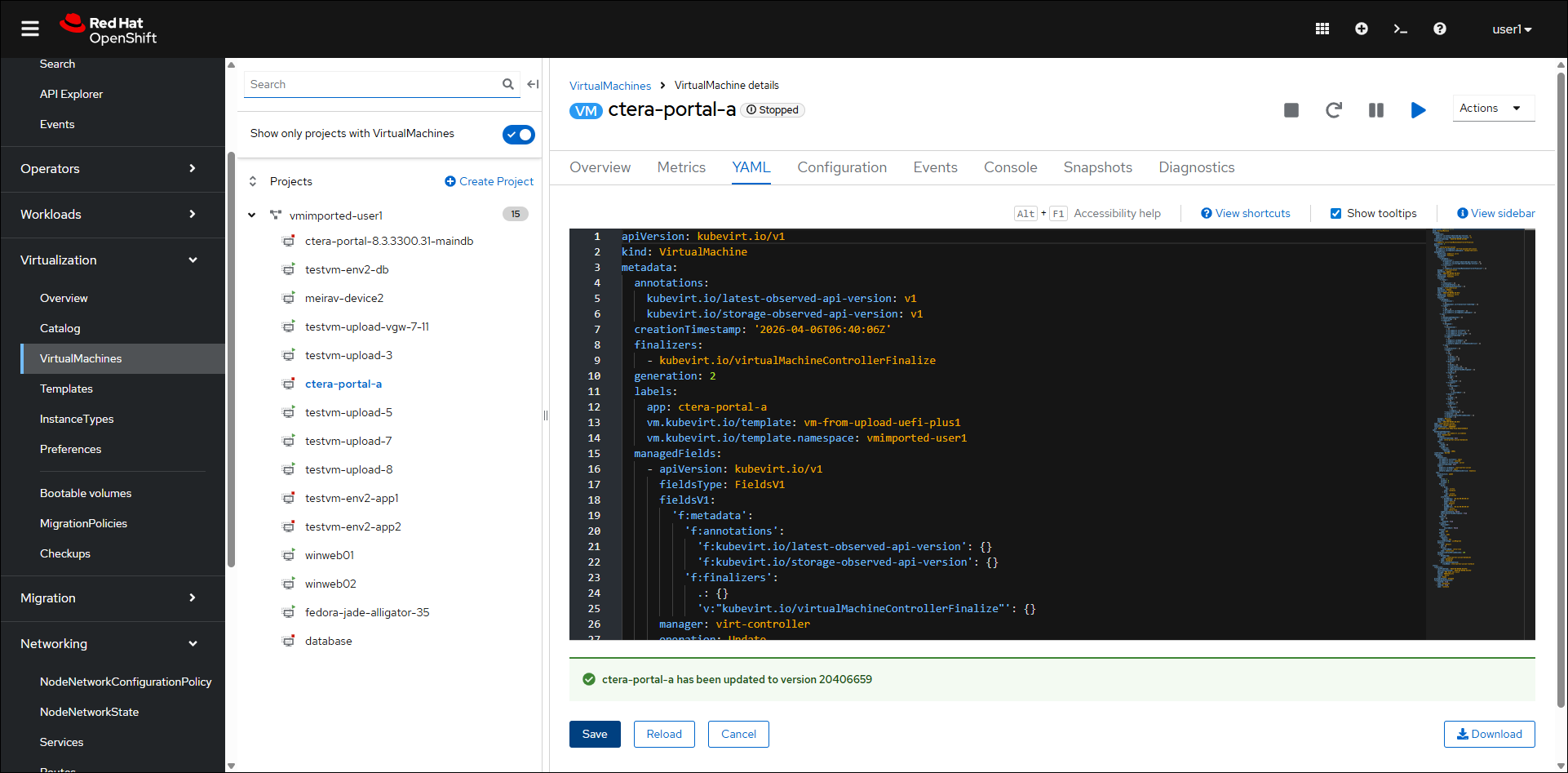

- Click Save.

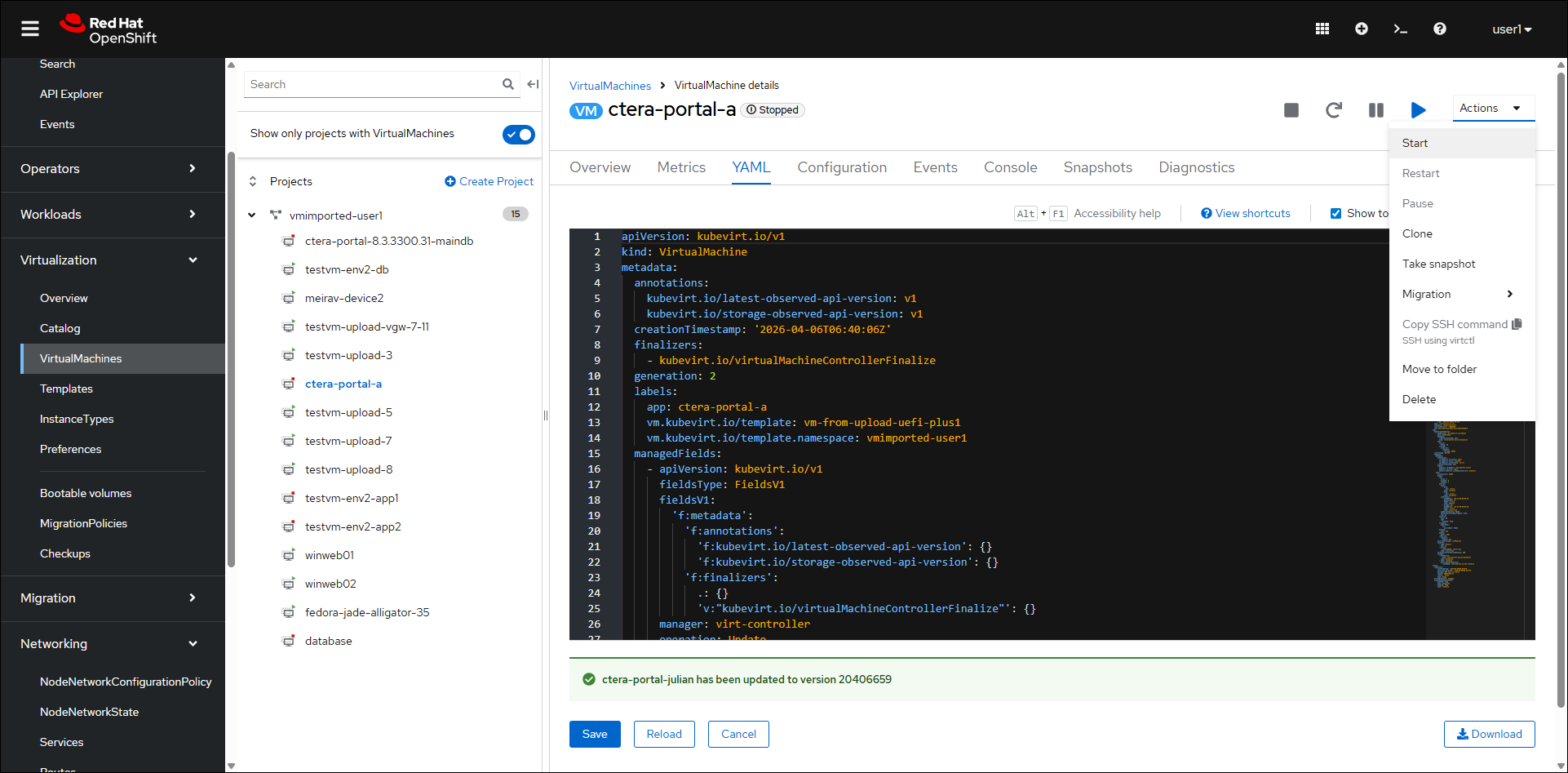
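If you prefer the command line to the YAML tab, the same two additions can be expressed as a JSON Patch and applied with oc patch. This is a sketch under assumptions, not the documented CTERA procedure: build_nic_patch is a hypothetical helper, and the VM name and namespace in the usage comment are placeholders. The network name ctera-vlan is the one used in this guide and must match the network set up by your Red Hat OpenShift administrator.

```shell
# Hypothetical helper: print a JSON Patch that appends a bridged interface
# and a matching Multus network to a KubeVirt VirtualMachine spec.
# $1: network name (ctera-vlan in this guide).
build_nic_patch() {
    net="$1"
    cat <<EOF
[
  {"op": "add", "path": "/spec/template/spec/domain/devices/interfaces/-",
   "value": {"bridge": {}, "model": "virtio", "name": "${net}"}},
  {"op": "add", "path": "/spec/template/spec/networks/-",
   "value": {"multus": {"networkName": "${net}"}, "name": "${net}"}}
]
EOF
}

# Example usage while the VM is stopped (VM name and namespace are placeholders):
# oc patch vm ctera-portal -n my-namespace --type=json -p "$(build_nic_patch ctera-vlan)"
```

The interface name and the network name must be identical, which is why the helper takes a single argument and uses it in both places.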

- Click Actions > Start.

Note

The VM contains two network interfaces:

- eth0 has a fixed IP 10.0.2.2 (NAT IP)

- eth1 has an IP from the network, for example 192.168.x.x. This IP is bridged to a physical interface and routable.
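Once you are logged in to the VM console, you can confirm that both interfaces have the expected addresses. The following is a minimal sketch that filters the output of ip -o -4 addr show; list_ipv4 is a hypothetical helper name used only for illustration.

```shell
# Hypothetical helper: read `ip -o -4 addr show` output on stdin and print
# "<interface> <address>" for every interface except loopback.
list_ipv4() {
    awk '$3 == "inet" && $2 != "lo" { sub(/\/.*/, "", $4); print $2, $4 }'
}

# On the VM:
# ip -o -4 addr show | list_ipv4
```

On a correctly configured VM the output should contain one line for eth0 with 10.0.2.2 and one line for eth1 with an address from the bridged network.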

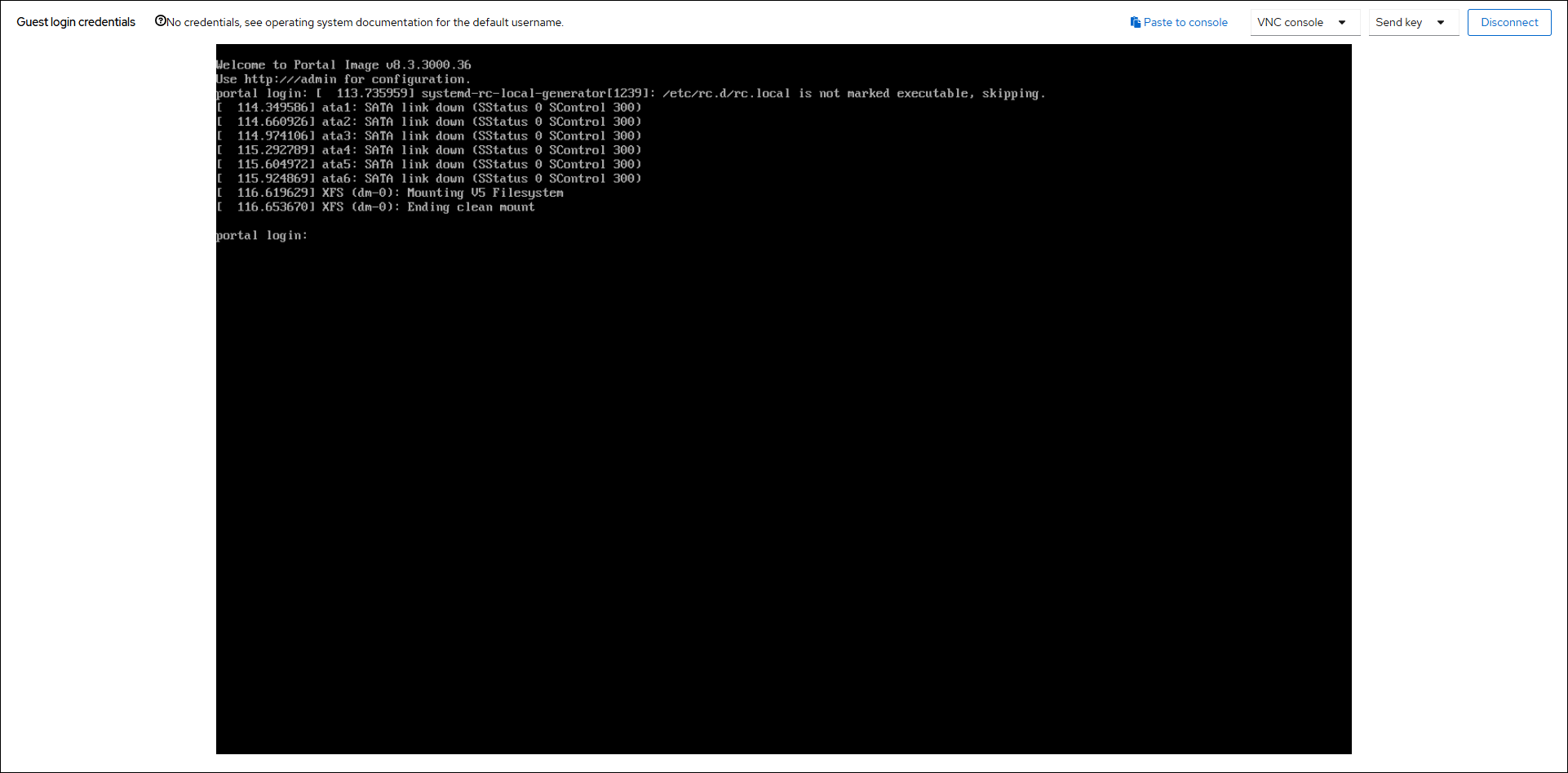

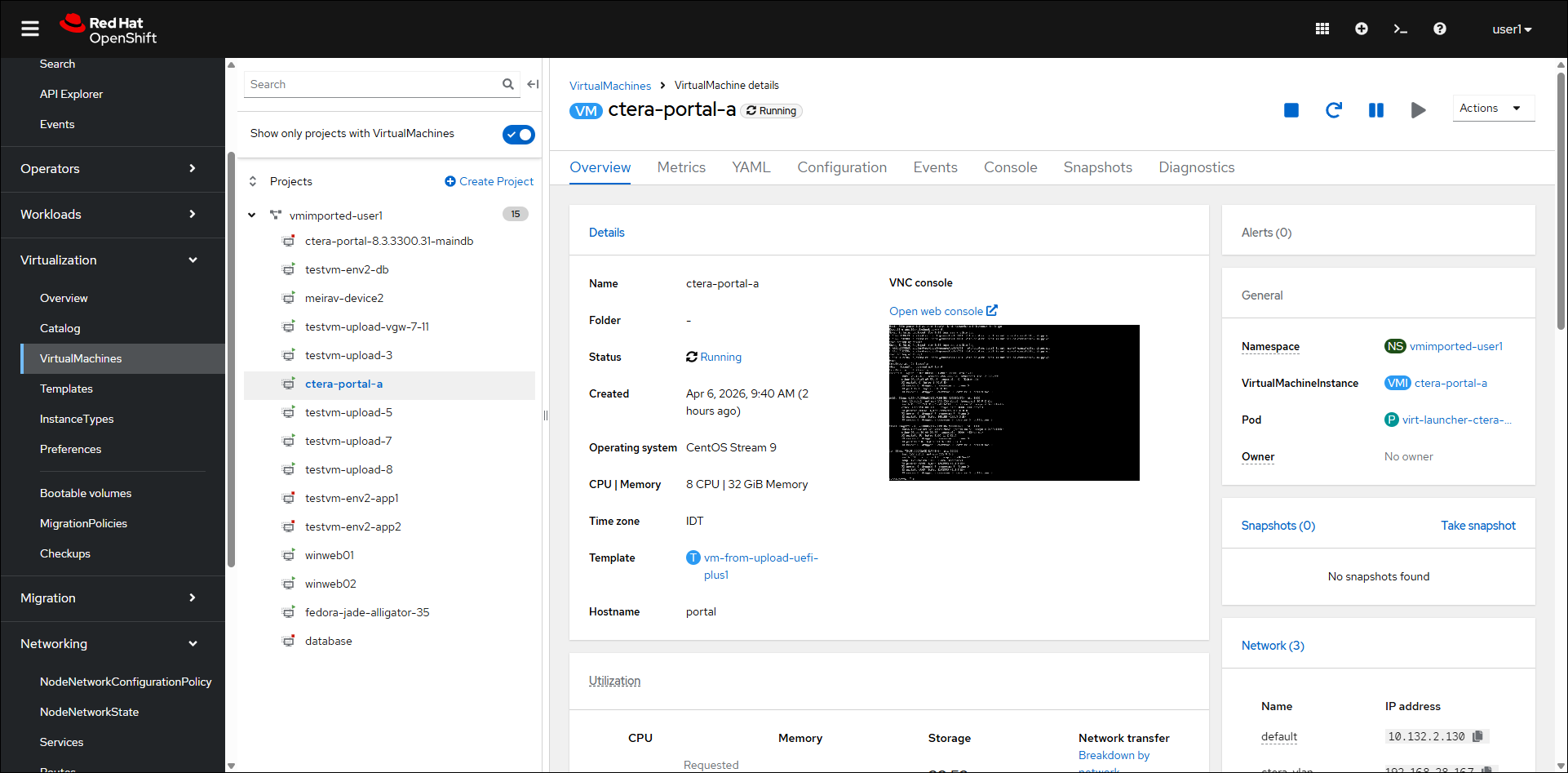

- Click the Console tab or click the Overview tab and then Open web console.

Note

When opening the web console from the Overview tab, the console is displayed in a new browser tab.

- If necessary, click Connect.

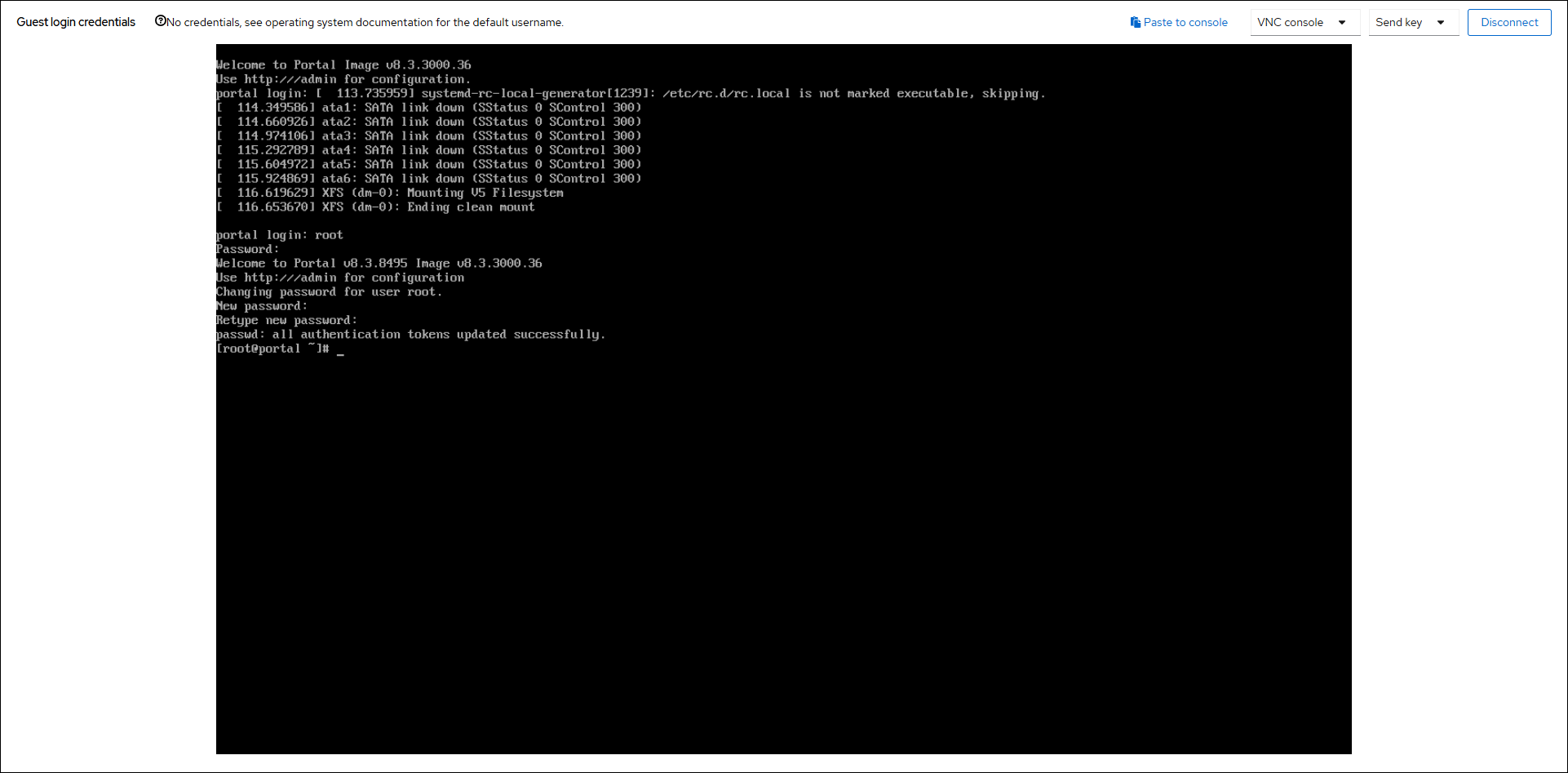

- Log in as

rootusing the default passwordctera321. - You are promptd to change the password.

- Navigate to

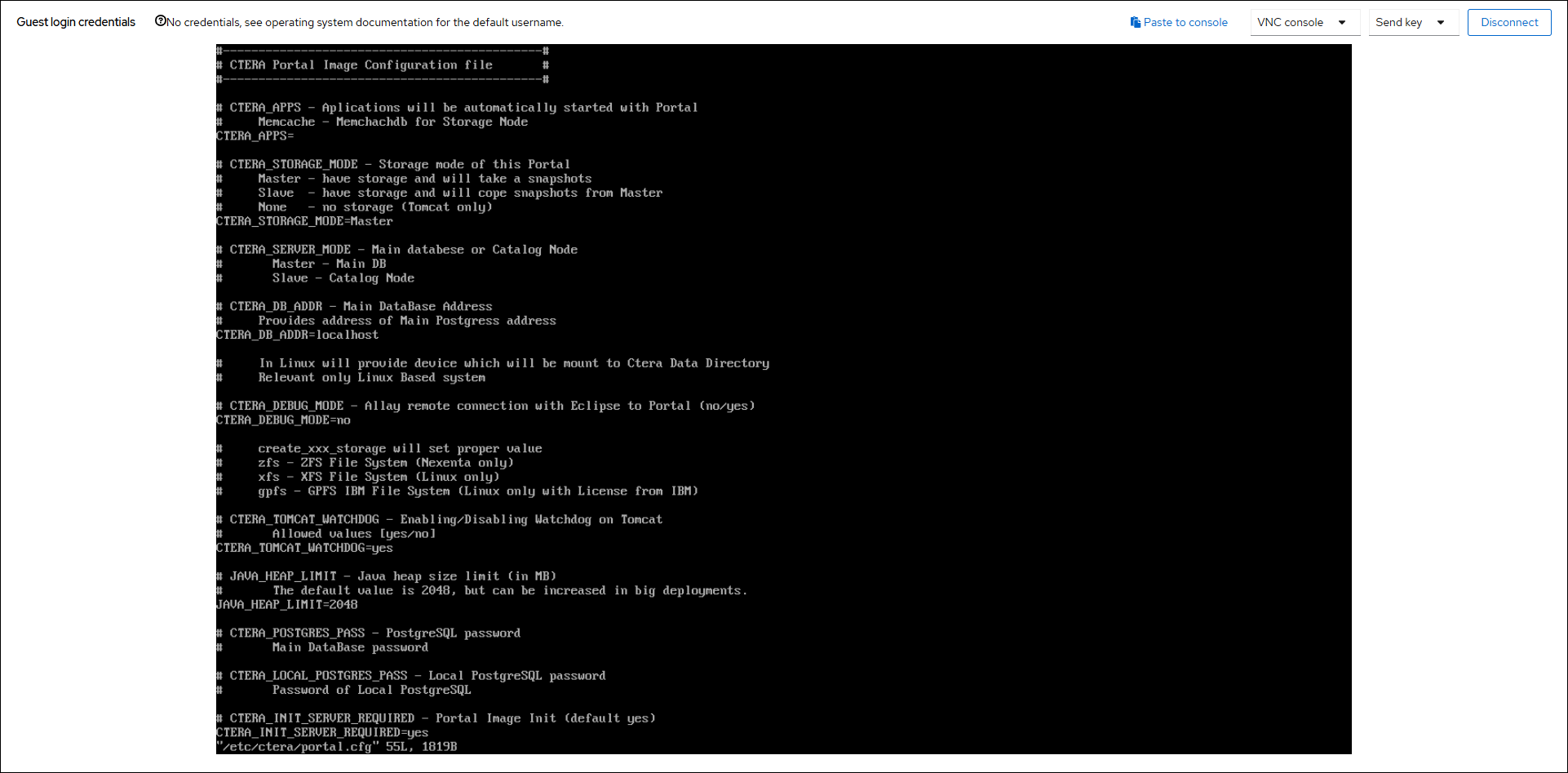

/etc/cteraand open portal.cfg configuration file for editing.NoteYou can also edit portal.cfg directly from any directory by entering

vi $portalcfg

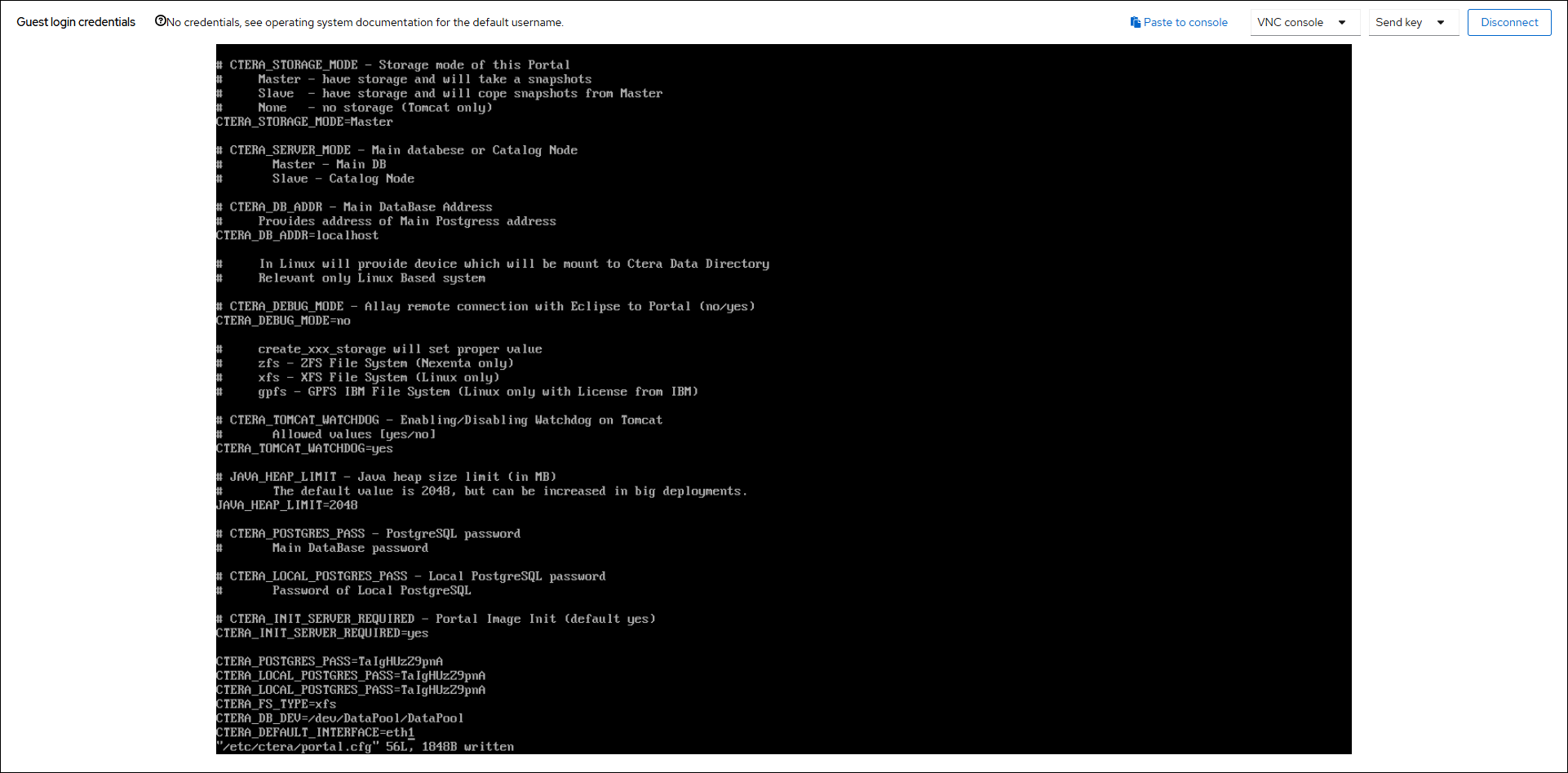

- Add CTERA_DEFAULT_INTERFACE=eth1 to portal.cfg and save the file.
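If you script this change instead of editing the file in vi, the setting should be added only once, even if the script runs again. The following is a minimal sketch, assuming portal.cfg holds plain KEY=value lines as the setting in this step suggests; set_cfg_key is a hypothetical helper, not a CTERA utility.

```shell
# Hypothetical helper: set KEY=VALUE in a config file. An existing KEY= line
# is replaced; otherwise the setting is appended. A .bak copy is kept.
set_cfg_key() {
    file="$1"; key="$2"; value="$3"
    cp -p "$file" "${file}.bak" || return 1
    if grep -q "^${key}=" "$file"; then
        # Rewrite from the backup so the original file keeps its permissions.
        sed "s|^${key}=.*|${key}=${value}|" "${file}.bak" > "$file"
    else
        # Make sure the file ends with a newline before appending.
        if [ -s "$file" ] && [ -n "$(tail -c 1 "$file")" ]; then
            echo >> "$file"
        fi
        printf '%s=%s\n' "$key" "$value" >> "$file"
    fi
}

# Example usage on the portal server:
# set_cfg_key /etc/ctera/portal.cfg CTERA_DEFAULT_INTERFACE eth1
```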

- Run the command portal.sh restart to restart the portal.

- To make sure that the script to create the data pool completed successfully, run docker images to check that the docker images are available, including zookeeper, which is the last docker to load to the data pool.

Note

If the script to create the data pool does not run successfully, it starts again on every boot until it completes. The script has a timeout, which means that it exits if the data pool is not created within the timeout after boot time. If the data pool is not created, the dockers required by the portal are not loaded to the data pool.

If not all the dockers load, run the script /usr/bin/ctera_firstboot.sh. Also, refer to Troubleshooting the Installation if the script does not complete successfully.

- Continue with Create the Archive Storage.

- You can assign a static IP, as described in Configuring Network Settings.

Note

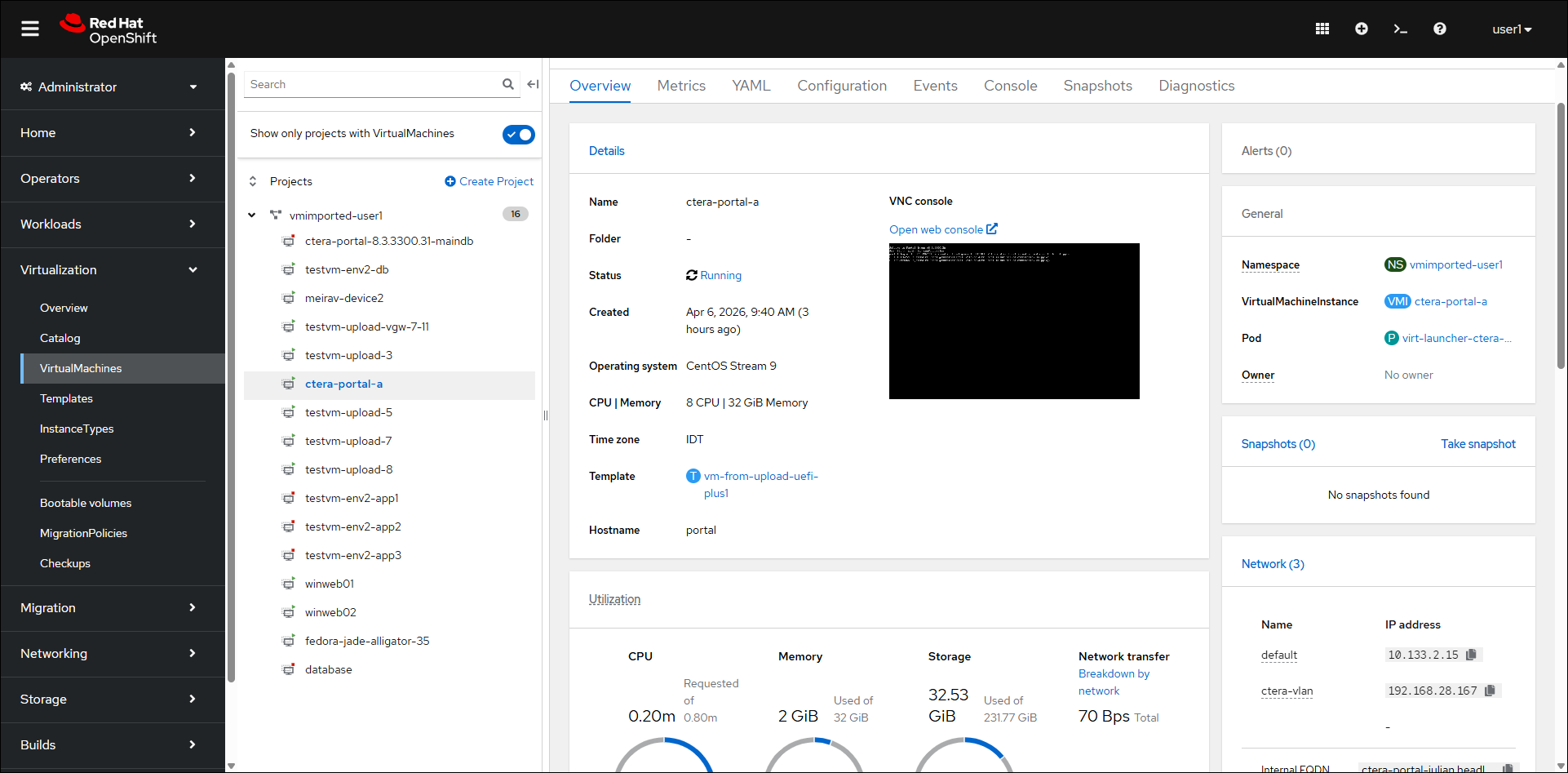

The VM is assigned an IP you can use, which is displayed in the VM Overview under the Network panel for the network name set up by the Red Hat OpenShift administrator and added to the YAML. In this screenshot, it is the ctera-vlan network.

- Start CTERA Portal services, by running the following command:

portal-manage.sh start

Do not start the portal until both the sdconv and envoy dockers have been loaded to the data pool. You can check that these dockers have loaded in /var/log/ctera_firstboot.log or by running docker images.

Create the Archive Storage

You need to create the archive pool on the primary database server and, when PostgreSQL streaming replication is required, also on the secondary (replication) database server. See Using PostgreSQL Streaming Replication for details about PostgreSQL streaming replication.

To create the archive pool:

- Log in to the Red Hat OpenShift console.

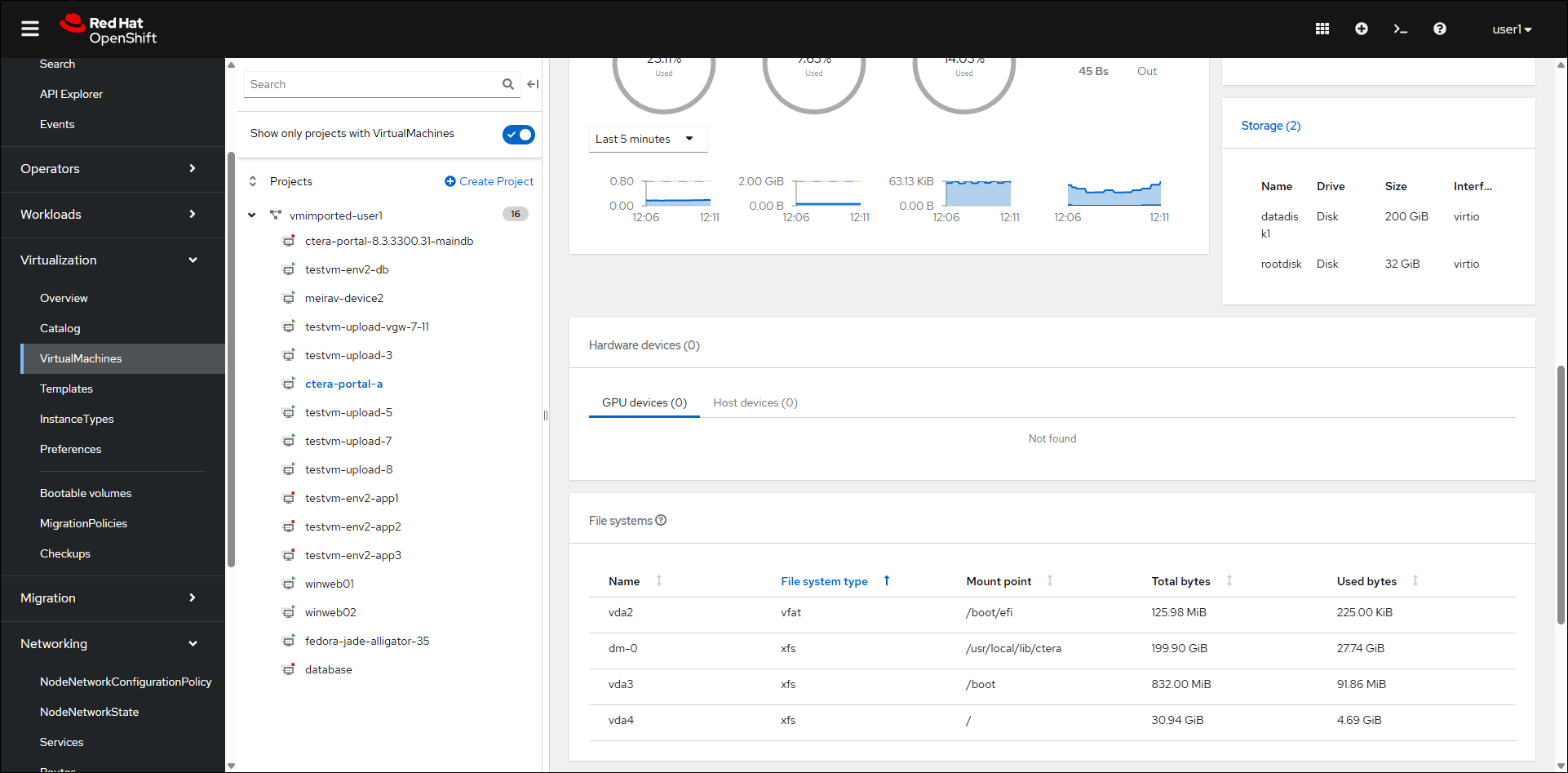

- Click Virtualization > VirtualMachines in the navigation pane and select the CTERA Portal VM.

The Overview panel for the VM is displayed.

- Scroll down to display the Storage panel.

- Click Storage (2).

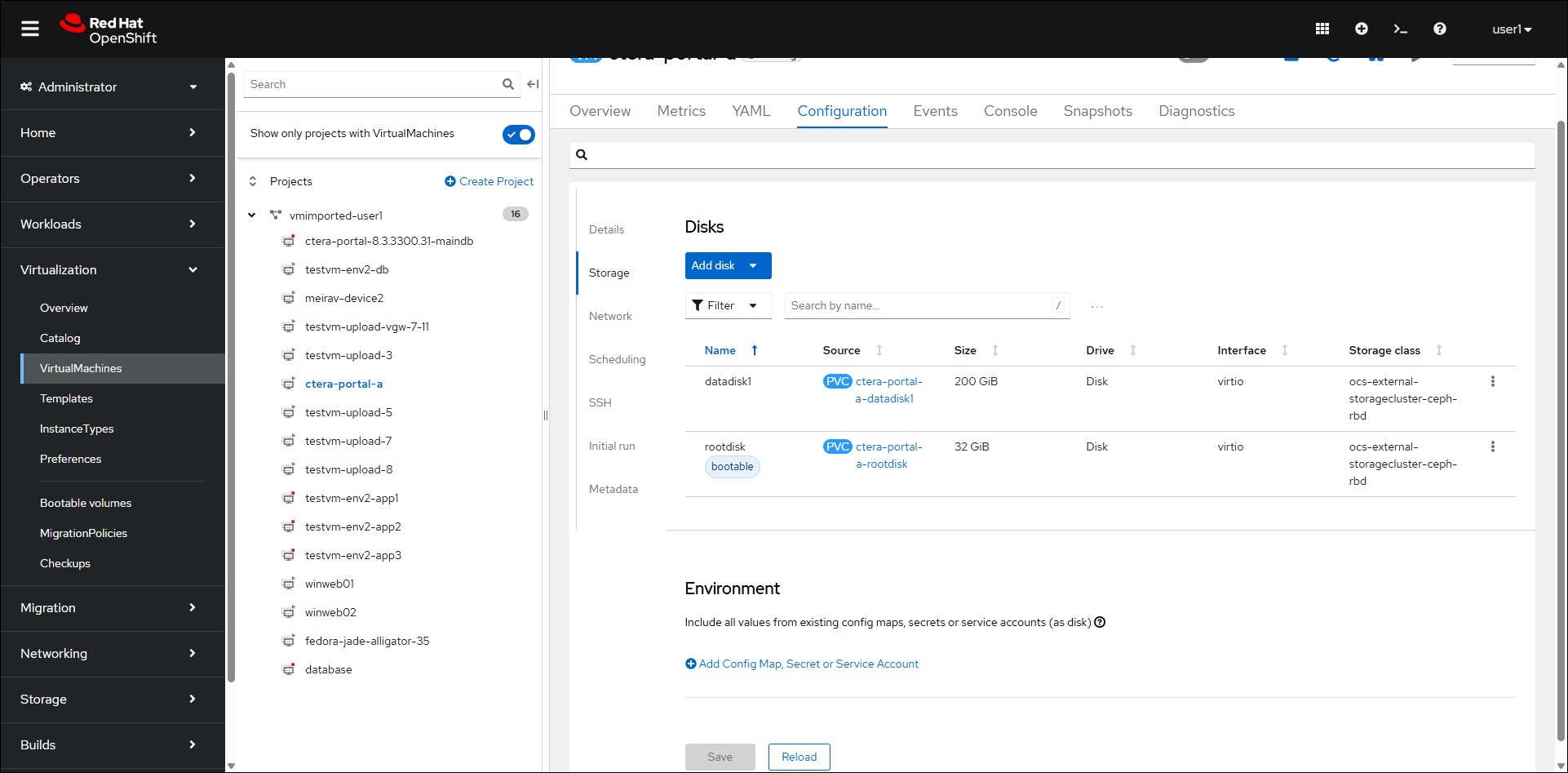

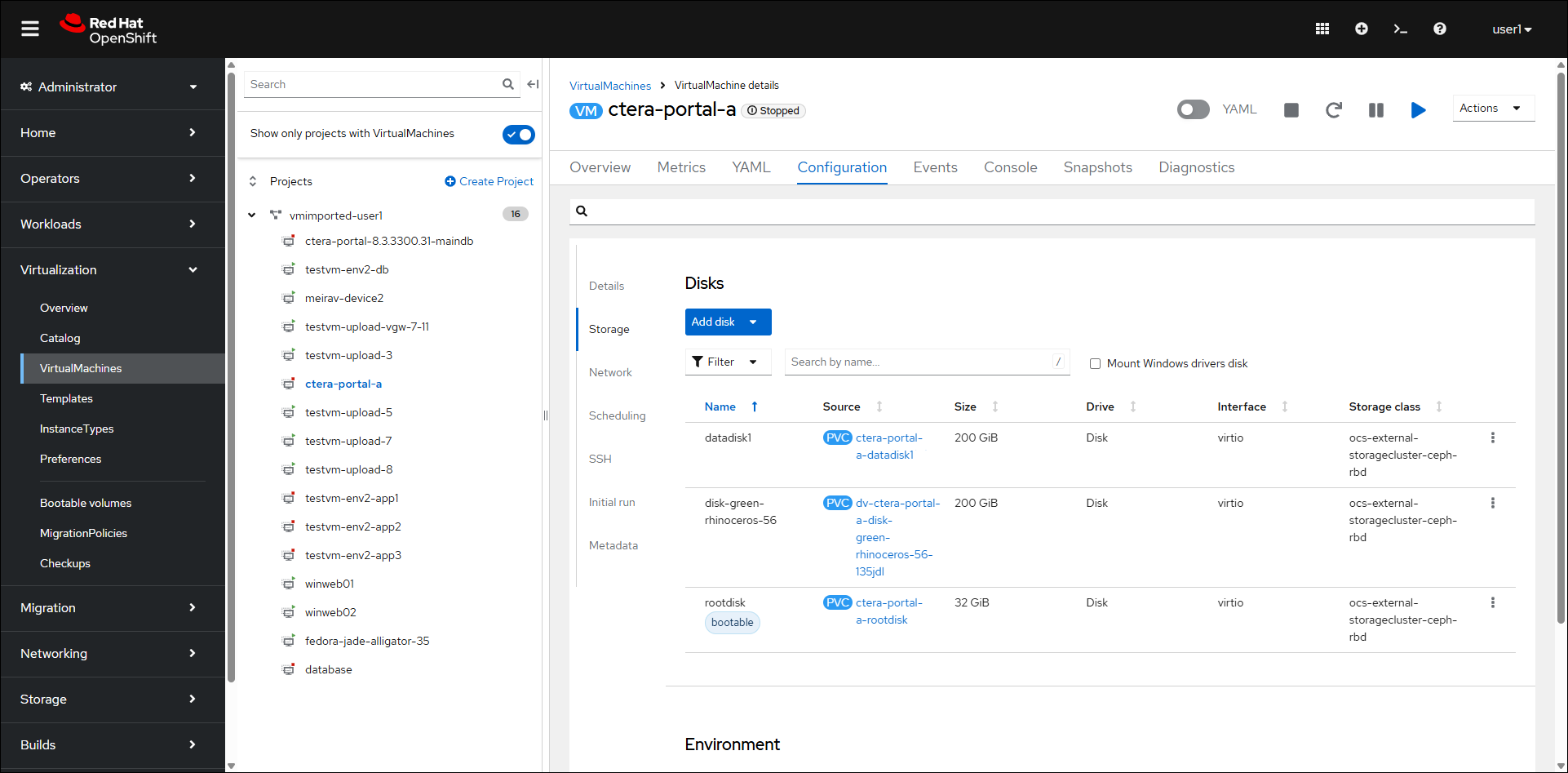

The Configuration > Disks panel is displayed.

- Click Actions > Stop.

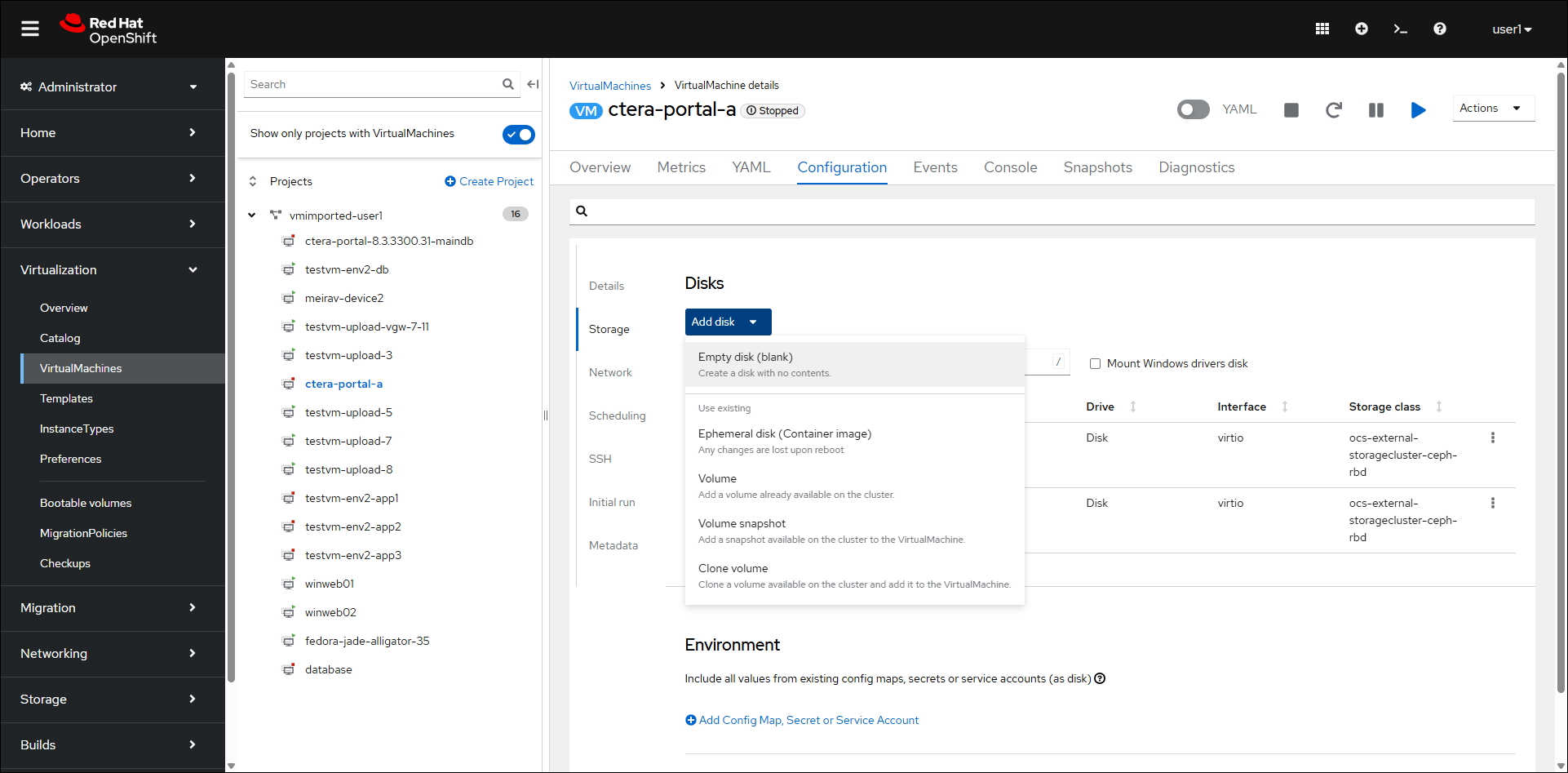

- Click Add disk.

- Select Empty disk (blank).

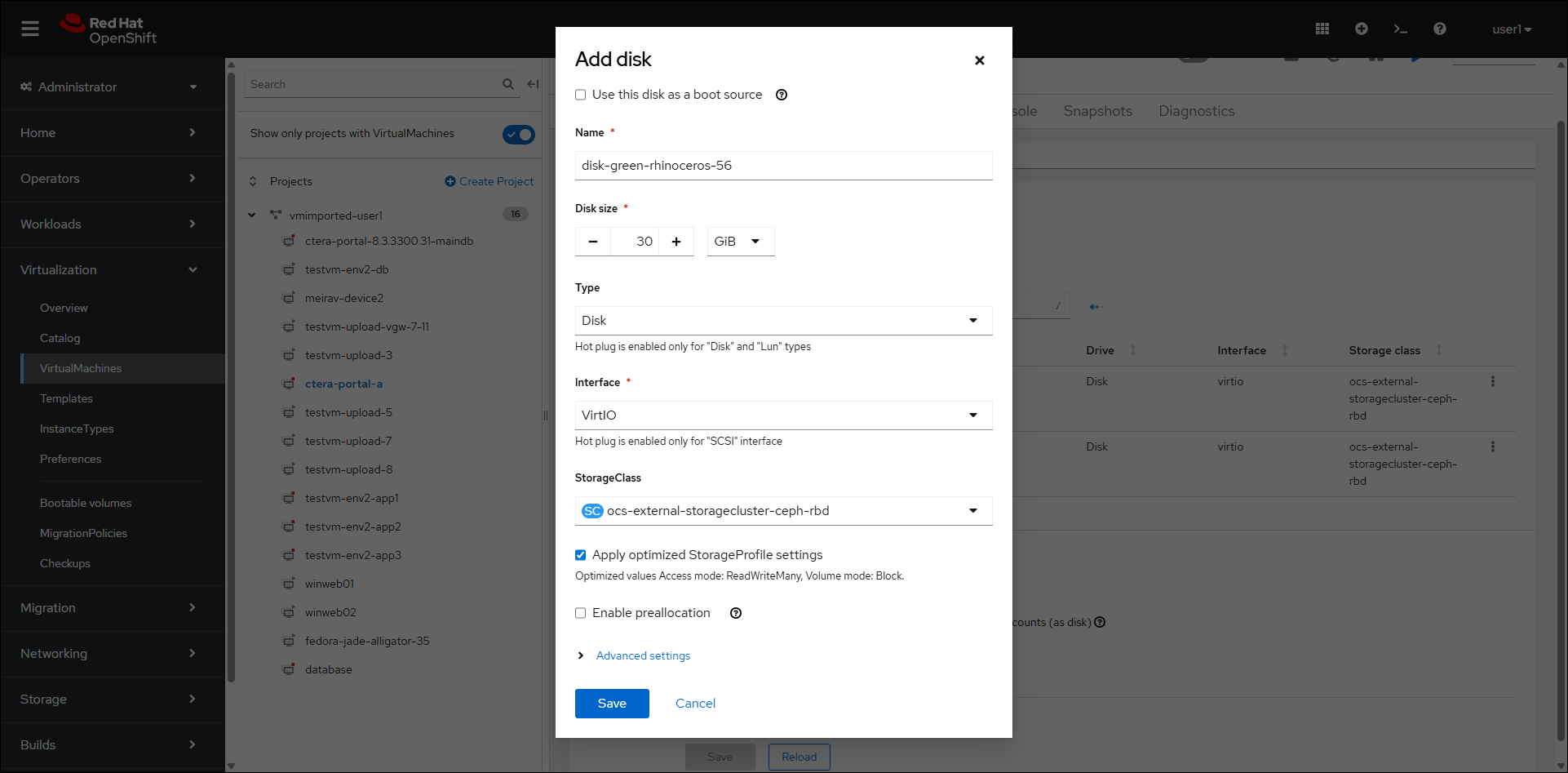

- Specify the Disk size.

Note

The archive pool must be at least 200GB and should be sized at around 2% of the expected global file system size. For more details, see Requirements.
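The sizing rule is a percentage of the expected global file system size with a floor of 200. A minimal sketch of the arithmetic follows; pool_size is a hypothetical helper, and the input and result are in whatever unit you pass in (for example GB or Gi). The same calculation applies to the data disk (EXTRA1_SIZE) with 1% instead of 2%.

```shell
# Hypothetical helper: print <percent>% of <total>, rounded up,
# but never less than <minimum>. All values are whole numbers in the same unit.
pool_size() {
    total="$1"; percent="$2"; minimum="$3"
    size=$(( (total * percent + 99) / 100 ))
    if [ "$size" -lt "$minimum" ]; then
        size="$minimum"
    fi
    echo "$size"
}

# Archive pool for an expected 100000 GB global file system: 2%, minimum 200.
pool_size 100000 2 200
```

For small deployments the floor applies: an expected size of 5000 GB gives 100 at 2%, so the disk should still be 200 GB.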

- Specify the StorageClass and click Save.

- Click Actions > Start.

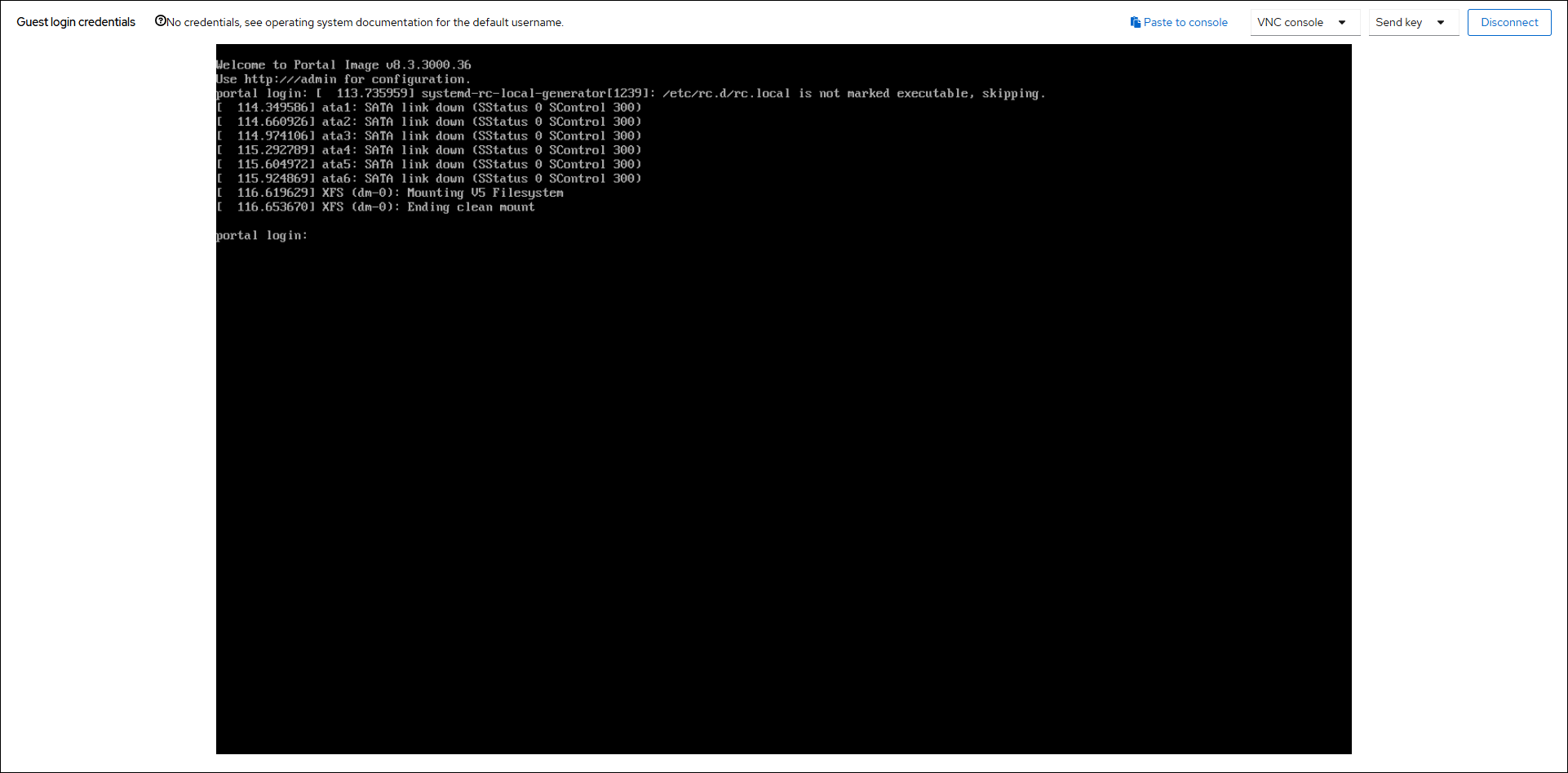

- Click the Console tab or click the Overview tab and then Open web console.

Note

When opening the web console from the Overview tab, the console is displayed in a new browser tab.

- If necessary, click Connect.

- Log in as

rootusing the default passwordctera321. - Run

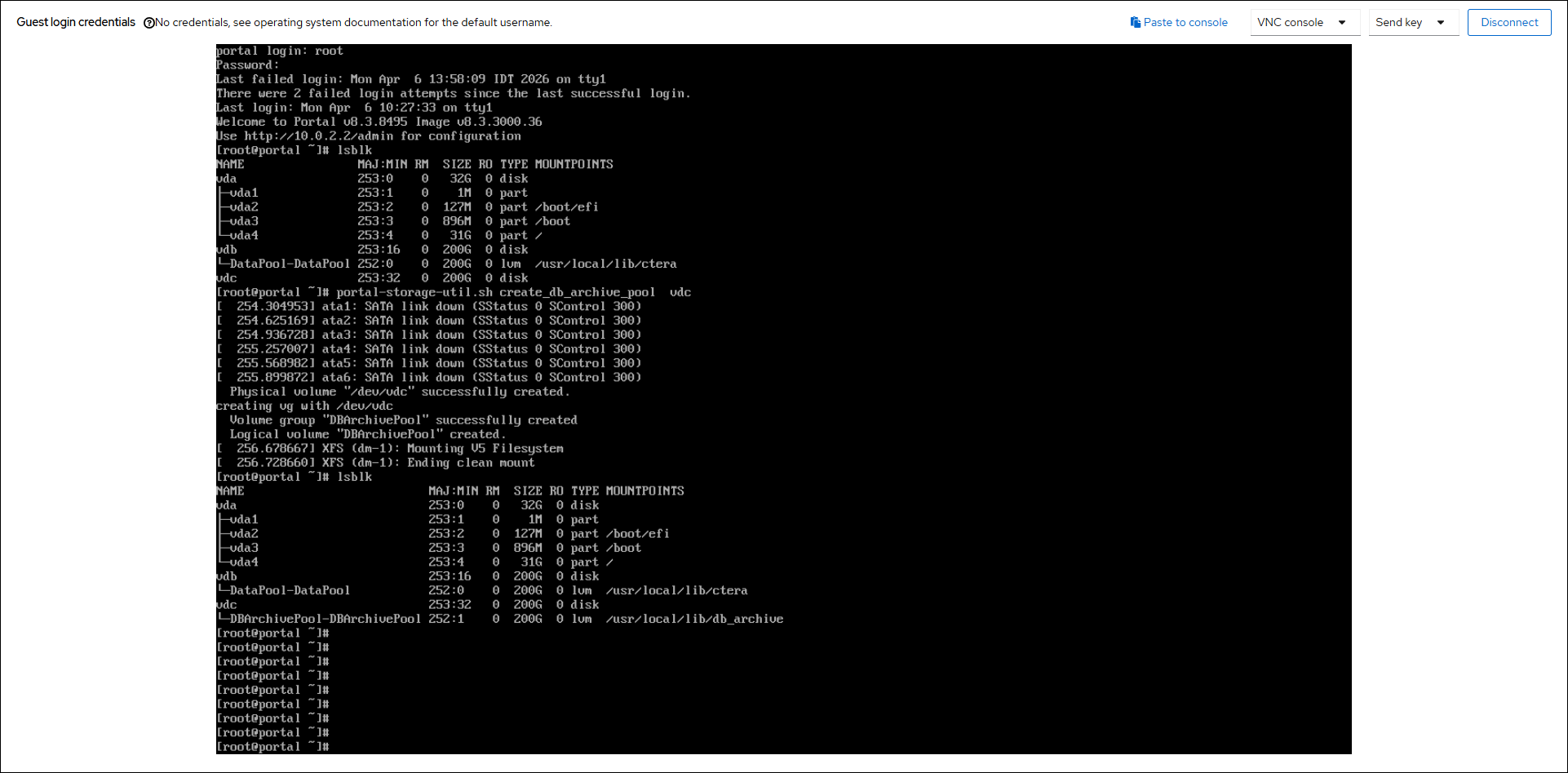

lsblkorfdisk -lto identify the disk to use for the data and archive pool. - Run the following command to create the archive pool:

portal-storage-util.sh create_db_archive_pool Device

where Device is the device name of the disk to use for the archive pool.

For example:

portal-storage-util.sh create_db_archive_pool vdc

This command creates an LVM volume group and a logical volume on the specified device. Because the pool is an LVM volume group, you can specify multiple devices if desired. For example:

portal-storage-util.sh create_db_archive_pool vdc vdd vde
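A mistyped device name is easy to miss when several devices are passed at once. The following is a minimal sketch of a guard that checks every device before calling the CTERA command; create_archive_pool is a hypothetical wrapper, and the assumption that a device name such as vdc corresponds to /dev/vdc is mine, based on the examples above.

```shell
# Hypothetical wrapper: verify that each named device exists as a block
# device under /dev (assumption) before creating the archive pool.
create_archive_pool() {
    if [ "$#" -eq 0 ]; then
        echo "usage: create_archive_pool DEVICE [DEVICE...]" >&2
        return 1
    fi
    for dev in "$@"; do
        if [ ! -b "/dev/$dev" ]; then
            echo "not a block device: /dev/$dev" >&2
            return 1
        fi
    done
    portal-storage-util.sh create_db_archive_pool "$@"
}

# Example usage on the portal server:
# create_archive_pool vdc vdd vde
```

The wrapper does not check whether a disk is already in use, so you still need to identify the correct disk with lsblk or fdisk -l first.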

Troubleshooting the Installation

You can check the progress of the docker loads in one of the following ways to ensure that all the dockers are loaded. The last docker to load is called zookeeper.

- In /var/log/ctera_firstboot.log

- By running docker images to check that the docker images are available.

- By checking if /var/lib/ctera_firstboot_completed is present with the date and time when the installation was performed.

If not all the dockers load, run the script /usr/bin/ctera_firstboot.sh.
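The docker images check can be scripted so that it reports which expected images are still missing. The following is a minimal sketch; missing_images is a hypothetical helper, and it matches names as substrings because the exact repository names of the CTERA images are not given in this guide. The names zookeeper, sdconv and envoy are the ones mentioned in this procedure.

```shell
# Hypothetical helper: read image repository names (one per line) on stdin
# and print each required name that does not appear in any of them.
missing_images() {
    loaded="$(cat)"
    for name in "$@"; do
        printf '%s\n' "$loaded" | grep -Fq -- "$name" || echo "$name"
    done
}

# Example usage on the portal server:
# docker images --format '{{.Repository}}' | missing_images zookeeper sdconv envoy
# ls -l /var/lib/ctera_firstboot_completed
```

An empty result means that all the listed images were found. Any name that is printed has not loaded yet.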